Sharpen the blade and brace for a journey steeped in myth and mystery. WUCHANG: Fallen Feathers has launched in the cloud.

Ride in style with skateboarding legends in Tony Hawk’s Pro Skater 1 + 2, now just a grind away. Or team up with friends to fend off relentless Zed hordes in the pulse-pounding action of Killing Floor 3.

The adventures begin now with nine new games on GeForce NOW this week.

Dive into the hauntingly beautiful world of Ming Dynasty China in WUCHANG: Fallen Feathers, where supernatural forces and corrupted souls lurk around every corner.

Step into the shoes of Wuchang, a fierce warrior battling monstrous enemies and the curse of the feathering phenomenon. Every decision and weapon swing carves a path through a world that’s as treacherous as it is breathtaking. The game’s rich lore and atmospheric storytelling pull players into a dark, soulslike adventure where danger and discovery go hand in hand.

Stream the game in all its gothic glory with GeForce NOW, no legendary hardware needed. Powered by a GeForce RTX gaming rig in the cloud, GeForce NOW enables members to stream instantly across devices and immerse themselves in epic combat and stunning visuals. Ultimate members can soar to new heights and stream at up to 4K resolution and 120 frames per second, or at up to 240 fps for even smoother gameplay — all at ultralow latency.

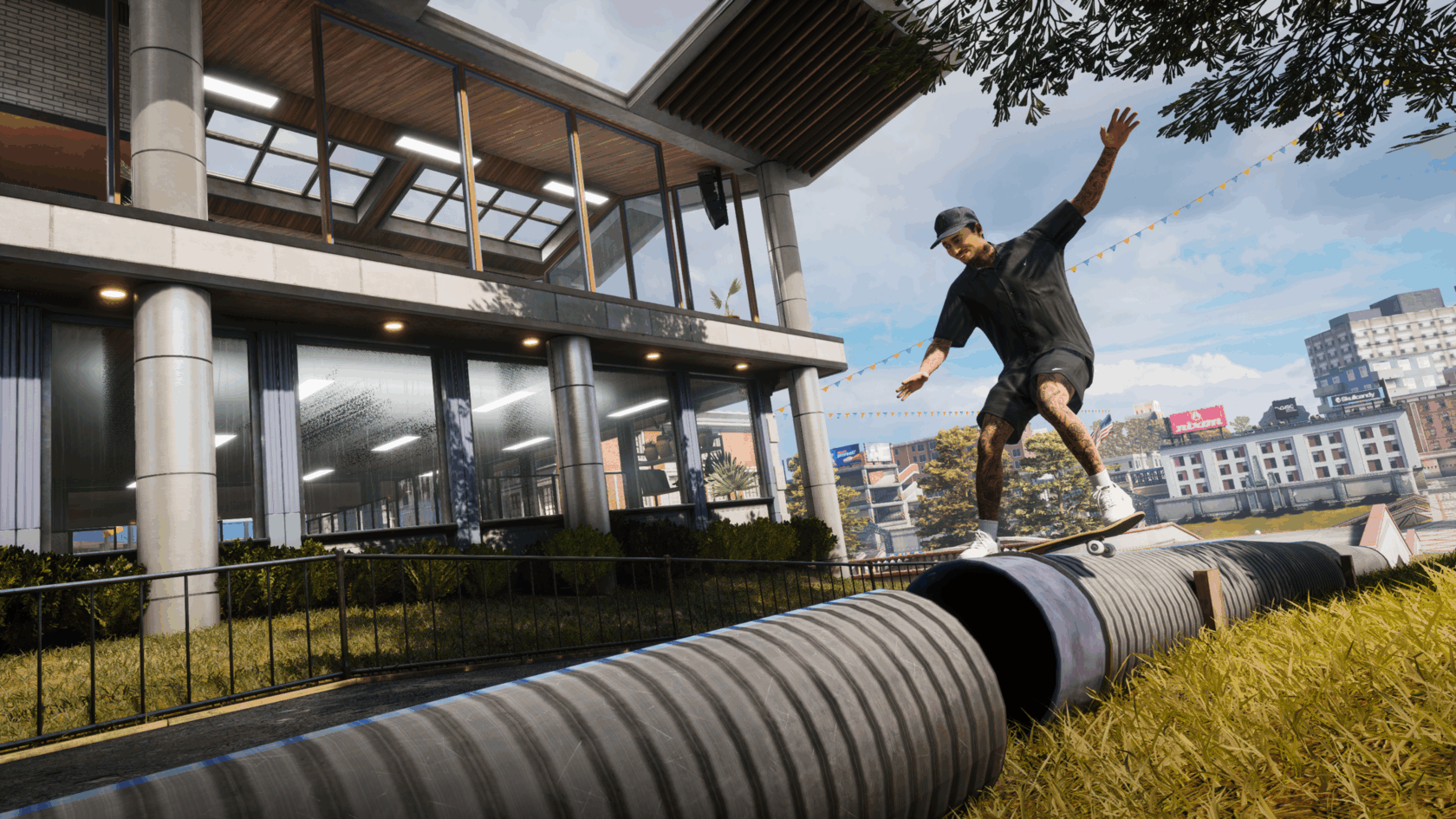

Drop in, grab a deck and get ready to grind — Tony Hawk’s Pro Skater 1 + 2 is kickflipping its way onto the cloud, joining the newly launched Tony Hawk’s Pro Skater 3 + 4. Drop back into the iconic skateboarding games with legendary pros, an era-defining soundtrack and insane trick combos.

Whether chasing high scores in the warehouse or spelling out S-K-A-T-E in school, these classics deliver all the gnarly action and style that defined a generation. Skate as legendary pros, master iconic parks and pull off wild tricks in Tony Hawk’s Pro Skater. Tony Hawk’s Pro Skater 2 ups the ante with new moves, create-a-skater mode and even more legendary levels to conquer.

With GeForce NOW, ollie into the action instantly — no installs, no updates, just pure skateboarding bliss across devices. Hit those perfect lines in crisp, high-resolution graphics, enjoy ultralow latency and keep progress rolling even on the go. It’s the ultimate way to shred, whether gamers are seasoned pros or just starting out.

Descend into chaos in Killing Floor 3, a next-generation co-op first-person shooter where players are humanity’s last defense against the relentless Zed horde, bioengineered monstrosities created by a sinister megacorporation. Take on the role of a Specialist, each with unique abilities, and face ever-evolving waves of smarter, faster enemies in atmospheric, gore-drenched arenas. Customize the arsenal, master new agile movement options and unleash powerful gadgets to turn the tide of battle. Use “Zed Time” to slow the action, exploit deadly environmental traps and upgrade gear to stand strong in the fight for survival.

In addition, members can look for the following:

What are you planning to play this weekend? Let us know on X or in the comments below.

You’ve avoided that one game boss long enough. Time to face your pixelated fears.

— NVIDIA GeForce NOW (@NVIDIAGFN), July 21, 2025

For media company Black Mixture, AI isn’t just a tool — it’s an entire pipeline to help bring creative visions to life.

Founded in 2010 by Nate and Chriselle Dwarika, Black Mixture takes an innovative approach to using AI in creative workflows. It built its visual reputation in motion design and production using traditional artistry methods and has since expanded its breadth of work to educating artists who want to start incorporating AI tools into their own projects, curating custom AI resources and sharing tutorials on its YouTube channel.

In recent years, Black Mixture has enhanced its creative workflows in apps with GPU-accelerated features. Today, the company has added new AI models and features to its toolkit, running the most complex AI tasks locally on an NVIDIA GeForce RTX 4090-equipped system.

Black Mixture uses generative AI to create or enhance video footage and image assets. This week, RTX AI Garage dives into some of the apps and workflows the company uses, as well as some AI tips and tricks.

Plus, NVIDIA Broadcast version 2.0.2 is now available, with up to a 15% performance boost for GeForce RTX 50 Series and NVIDIA RTX PRO Blackwell GPUs.

Iteration is a critical part of the generative AI content creation process, with a typical project requiring the generation of up to hundreds of images.

While models such as ChatGPT and Google Gemini can create a single image fairly quickly, artists like Nate and Chriselle Dwarika require much faster speeds and greater creative control. With on-device AI tools accelerated by RTX, that speed and control are achievable.

“Generating a standard 1024×1024 image in ComfyUI takes 2-3 seconds on my RTX 4090. When you’re batch-generating hundreds of assets, that’s the difference between an hour, an entire day or more.” — Nate Dwarika

Using generative AI, the Black Mixture team can easily draw inspiration from a much wider variety of high-quality images than with manual methods, while refining outputs to their client’s preferred vision.

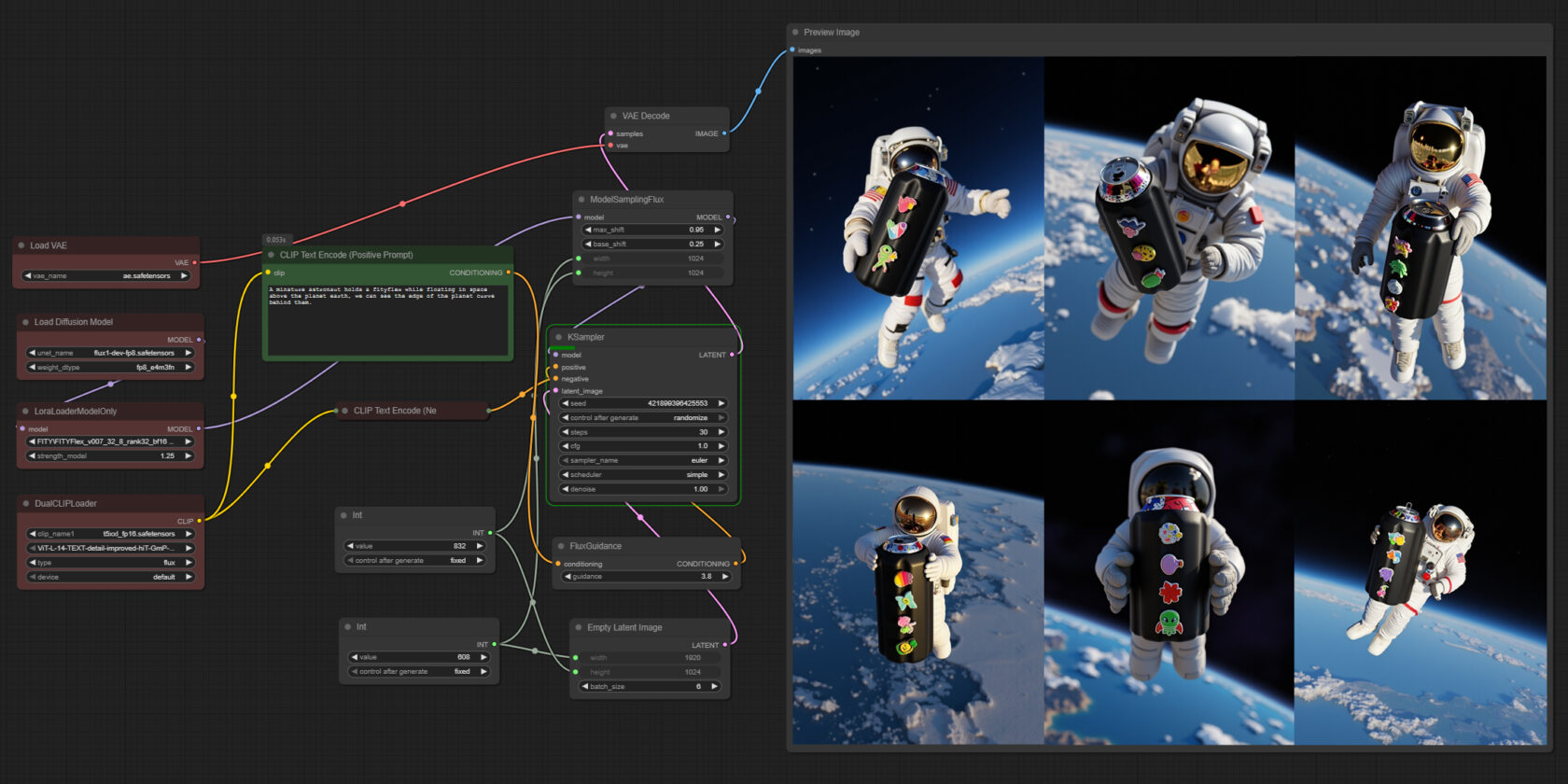

Nate Dwarika uses the node-based interface ComfyUI in his text-to-image workflow. It offers a high degree of flexibility in the arrangement of nodes, with the ability to combine different characteristics of popular generative AI models — all accelerated by his GeForce RTX GPU.

One such generative AI model is FLUX.1-dev, an image generation model from Black Forest Labs. When deployed in ComfyUI on an RTX AI PC, the model taps CUDA accelerations in PyTorch that significantly speed an artist’s workflow. The image below, which would take about two minutes per image on a Mac M3 Ultra, takes less than 12 seconds to generate with a GeForce RTX 4090 desktop GPU.

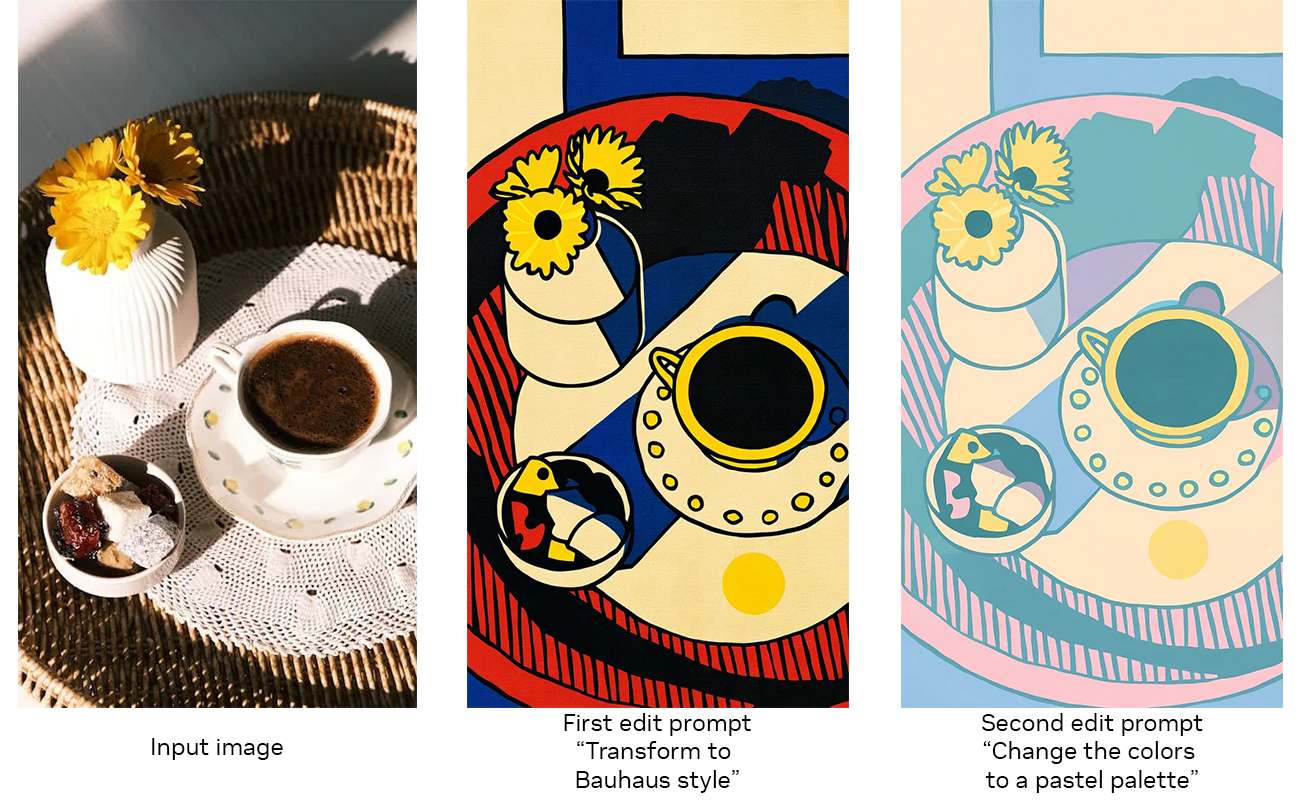
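To put those per-image figures into batch terms, here is a rough back-of-the-envelope sketch. The two per-image times (about 120 seconds and 12 seconds) come from the comparison above; the batch size of 300 images is an assumed stand-in for "hundreds of images," and `batch_minutes` is a hypothetical helper, not part of ComfyUI.

```python
def batch_minutes(seconds_per_image: float, num_images: int) -> float:
    """Total minutes to generate a batch one image at a time."""
    return seconds_per_image * num_images / 60.0


NUM_IMAGES = 300            # assumption: a batch of "hundreds" of images
SLOW_SECONDS = 120.0        # about two minutes per image (figure quoted above)
FAST_SECONDS = 12.0         # "less than 12 seconds" per image, used as an upper bound

slow_total = batch_minutes(SLOW_SECONDS, NUM_IMAGES)
fast_total = batch_minutes(FAST_SECONDS, NUM_IMAGES)
speedup = SLOW_SECONDS / FAST_SECONDS

print(f"Slower system: {slow_total:.0f} minutes")
print(f"Faster system: {fast_total:.0f} minutes")
print(f"Speedup: {speedup:.0f}x")
```

Under these assumptions the batch drops from roughly ten hours to about one. Real projects will vary with resolution, step count and model choice.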

Black Forest Labs also offers FLUX.1 Kontext, a family of models that allow users to start from a reference image or text prompt and guide edits with suggestions, without fine-tuning or complex workflows with multiple ControlNets (extensions that enable more precise control over the image structure, composition and more).

Take the stunning dragon visual below. FLUX.1 Kontext enabled the artist to rapidly iterate on his initial visual, guiding the AI model with additional inputs like poses, edges and depth maps within the same prompt window.

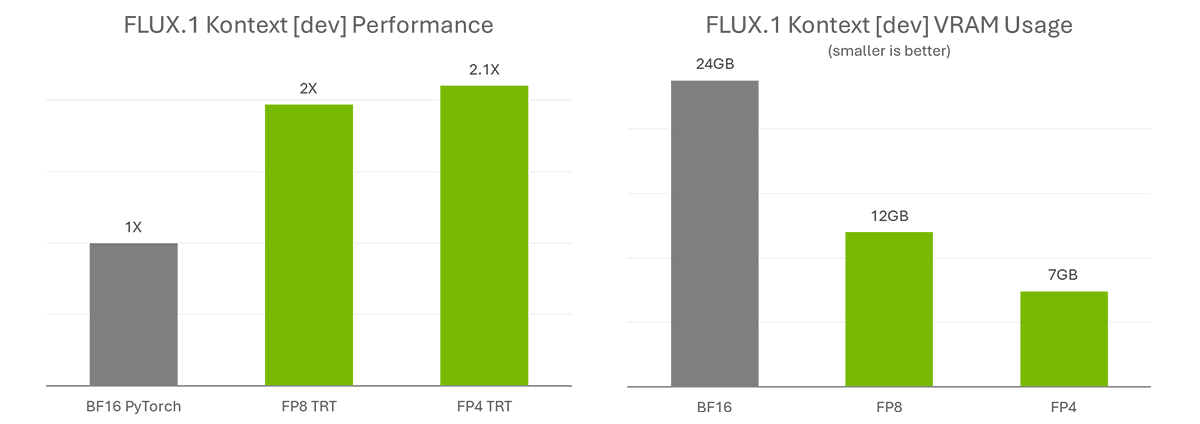

FLUX.1 Kontext is also offered in quantized variants that reduce VRAM requirements so more users can run it locally. It makes use of hardware accelerations in NVIDIA GeForce RTX Ada Generation and Blackwell GPUs.

Together with NVIDIA TensorRT optimizations, these variants provide more than double the performance. There are two levels of quantization: FP8 and FP4. FP8 is accelerated on both GeForce RTX 40 and 50 Series GPUs, while FP4 is accelerated solely on the 50 Series.
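As a rough illustration of why lower-precision weights need less memory, the sketch below estimates weight storage alone at different bit widths. The 16-bit baseline and the 12-billion-parameter count are assumptions chosen for illustration, not figures from this post, and `weight_gigabytes` is a hypothetical helper.

```python
# Bits used to store each weight at a given precision.
# Assumption: the unquantized baseline stores weights in a 16-bit format.
BITS_PER_WEIGHT = {"FP16": 16, "FP8": 8, "FP4": 4}

# Assumption: an illustrative parameter count, not a published spec.
ASSUMED_PARAMS = 12e9


def weight_gigabytes(num_params: float, bits: int) -> float:
    """Memory for the weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits / 8 / 1e9


for name, bits in BITS_PER_WEIGHT.items():
    print(f"{name}: {weight_gigabytes(ASSUMED_PARAMS, bits):.1f} GB of weights")
```

Actual VRAM use also includes activations, text encoders and other pipeline components, and quantized formats typically store some extra scaling data, so real-world savings are smaller than the raw halving this arithmetic suggests.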

Dwarika also uses Stable Diffusion 3.5, which supports FP8 quantization for the GeForce RTX 40 Series — enabling 40% less VRAM usage and nearly doubling image generation speed.

With these visual AI tools, Black Mixture can create photorealistic, original visuals for its clients in a time- and cost-effective way.

Nate and Chriselle Dwarika also use a variety of more traditional content creation tools — all accelerated by RTX. For video content, Nate Dwarika captures footage on OBS Studio using the NVIDIA encoder (NVENC) built into his GeForce RTX 4090 GPU. Because NVENC is a separate encoder, the rest of the GPU can focus on resource-intensive ComfyUI workflows without reducing performance, all while maintaining top encoding quality.

The husband-and-wife duo completes final edits and touch-ups in Adobe Premiere Pro, which includes a wide variety of RTX-accelerated AI features like Media Intelligence, Enhance Speech and Unsharp Mask. They use GPU-accelerated encoding with NVENC to dramatically speed final file exports.

Black Mixture’s next project is an Advanced Generative AI Course to help creators get started with using AI in their workflows. It includes a series of live trainings that explore generative AI capabilities, as well as NVIDIA RTX AI technology and optimizations. Attendees can expect 6-8 weeks of live sessions, 30+ hours of self-paced lessons, guest experts, a student showcase, exclusive AI toolkits and more.

NVIDIA Broadcast version 2.0.2 delivers up to 15% faster performance for NVIDIA GeForce RTX 50 Series and NVIDIA RTX PRO Blackwell GPUs.

With the performance boost, AI features like Studio Voice and Virtual Key Light can now be used on GeForce RTX 5070 GPUs or higher. Studio Voice enhances the sound of a user’s microphone to match that of a high-quality microphone, while Virtual Key Light relights a subject’s face to illuminate it as if it were well-lit by two lights.

The Eye Contact feature — which uses AI to make it appear as if users are looking directly at the camera, even when glancing to the side or taking notes — is improved with increased stability for eye color. Additional enhancements include updated user interface elements for easier navigation and improved high contrast theme support.

Download NVIDIA Broadcast.

Each week, the RTX AI Garage blog series features community-driven AI innovations and content for those looking to learn more about NVIDIA NIM microservices and AI Blueprints, as well as building AI agents, creative workflows, productivity apps and more on AI PCs and workstations.

Plug in to NVIDIA AI PC on Facebook, Instagram, TikTok and X — and stay informed by subscribing to the RTX AI PC newsletter. Join NVIDIA’s Discord server to connect with community developers and AI enthusiasts for discussions on what’s possible with RTX AI.

Follow NVIDIA Workstation on LinkedIn and X.

See notice regarding software product information.

In today’s fast-evolving digital landscape, marketing teams face increasing pressure to deliver personalized, brand-accurate content at scale and speed. Traditional content creation workflows are often time-consuming, costly and fragmented across multiple tools and teams.

Universal Scene Description (OpenUSD), an open and extensible 3D framework, is helping teams overcome these challenges by streamlining how marketing content is created, managed and delivered.

Global brands including Coca-Cola, Moët Hennessy, Nestlé and Unilever are harnessing innovative marketing solutions built on NVIDIA Omniverse — a platform for developing OpenUSD applications. These AI-based solutions dramatically accelerate content generation for advertising and consumer engagement:

By using the NVIDIA Omniverse Blueprint for precise visual generative AI, solution providers and software developers are enabling organizations to rapidly produce high-quality, brand-accurate, engaging visuals for local markets at scale, streamlining workflows and ensuring creative consistency across every channel.

Industry leaders are already seeing the results of tapping AI and OpenUSD for marketing workflows.

Accenture Song used OpenUSD in Omniverse to launch an AI-powered content service for Nestlé. The content service creates exact 3D virtual replicas of products for e-commerce and digital media channels, demonstrating the impact of digital twins and advanced 3D workflows.

Accenture Song, Nestlé

SKAI Intelligence, a global provider of AI-powered content creation solutions, recently debuted the world’s first end-to-end, retail-focused AI-generated content production pipeline built entirely on NVIDIA Omniverse. The browser-based, AI-native workflow automates the entire content generation process — from product scanning and modeling to animation, lighting and rendering — and delivers up to 95% faster production speeds versus traditional methods.

Katana Studio, a real-time 3D content creation studio and developer behind the COATcreate tool, has used NVIDIA Omniverse to streamline automotive marketing for Nissan, significantly reducing asset creation timelines and costs.

INDG, a digital content automation company, developed the software-as-a-service platform Grip on NVIDIA Omniverse and OpenUSD to empower global brands like Moët Hennessy and Coca-Cola to produce high-quality, brand-consistent content across markets.

Grip, Moët Hennessy

By centralizing OpenUSD asset libraries and creating digital twins of products, Grip enables teams to quickly assemble, adapt and deploy campaign-ready content in just minutes — rather than weeks. This approach directly addresses the challenges of slow, costly and inconsistent manual localization processes that have long hindered marketing efforts.

Grip relies on rules-based AI, NVIDIA RTX GPUs and the NVIDIA AI Enterprise software platform to ensure brand consistency across diverse markets. The Grip platform also integrates Bria’s visual generative AI models to enhance automated content production at scale. Grip’s content engine acts as a virtual art director, codifying and enforcing brand guidelines for every asset while dynamically adjusting composition, lighting and product details.

Dive deeper into Grip’s innovative approach at the company’s upcoming session at SIGGRAPH, a computer graphics conference taking place Aug. 10-14 at the Vancouver Convention Centre and online.

Unilever, in collaboration with Collective World, is using Omniverse, OpenUSD and photorealistic 3D digital twins to accelerate content production. Unilever’s new content-creation workflow, powered by real-time 3D rendering, has cut production timelines from months to days, halved costs and enabled consistent brand experiences across markets with a 5x reduction in content duplication.

Collective World, Unilever, Nexxus

Monks, a digital-first marketing and technology services company, is also using Omniverse and OpenUSD to drive hyperpersonalized and collaborative product experiences. The technologies allow Monks’ services to empower brands to virtually explore and customize product designs in real time.

Hear Monks representatives discuss how they’re building automated pipelines and agentic systems to ease deployment and scaling of AI-driven marketing operations across the enterprise:

Discover the future of 3D content creation and connect with the OpenUSD community by joining NVIDIA at SIGGRAPH. Highlights will include:

Discover why developers and 3D practitioners are using OpenUSD and learn how to optimize 3D workflows with the self-paced “Learn OpenUSD” curriculum for 3D developers and practitioners, available for free through the NVIDIA Deep Learning Institute.

Explore the Alliance for OpenUSD forum and the AOUSD website.

Stay up to date by subscribing to NVIDIA Omniverse news, joining the Omniverse community and following NVIDIA Omniverse on Instagram, LinkedIn, Medium and X.

Featured image courtesy of Grip, Moët Hennessy.

Editor’s note: This post is part of the AI On blog series, which explores the latest techniques and real-world applications of agentic AI, chatbots and copilots. The series also highlights the NVIDIA software and hardware powering advanced AI agents, which form the foundation of AI query engines that gather insights and perform tasks to transform everyday experiences and reshape industries.

With advancements in agentic AI, intelligent AI systems have matured to the point where they can facilitate autonomous decision-making across industries, including financial services.

Over the last year, customer service-related use of generative AI, including chatbots and AI assistants, has more than doubled in financial services, rising from 25% to 60%. Organizations are using AI to automate time-intensive tasks like document processing and report generation, driving significant cost savings and operational efficiency.

According to NVIDIA’s latest State of AI in Financial Services report, more than 90% of respondents reported a positive impact on their organization’s revenue from AI.

AI agents are versatile, capable of adapting to complex tasks that require strict protocols and secure data usage. They can help with an expanding list of use cases, from enabling better investment decisions by automatically identifying portfolio optimization strategies to ensuring regulatory alignment and compliance automation.

To improve market returns and business performance, AI agents are being adopted in various areas that benefit greatly from autonomous decision-making backed by data.

According to the State of AI in Financial Services report, 60% of respondents said customer experience and engagement was the top use case for generative AI. Businesses using AI have already seen customer experiences improve by 26%.

AI agents can help automate repetitive tasks while providing next steps, such as dispute resolution and know-your-customer updates. This reduces operational costs and helps minimize human errors.

By handling customer inquiries and forms, AI chatbots scale support and ensure 24/7 availability, enhancing customer satisfaction. Employees can focus on higher-level, judgment-based cases, rather than performing case intake, data analysis and documentation.

In addition, AI agents are crucial for fraud detection, as they can detect and respond to suspicious transactions automatically. The State of AI report highlighted that out of 20 use cases, cybersecurity experienced the highest growth over the last year, with more than a third of respondents now assessing or investing in AI for cybersecurity.

AI closes the time gap between detection and action, which matters because a lack of timely action can result in significant financial loss.

To combat fraud, AI agents can monitor transaction patterns in real time, learn from new types of fraud and take immediate action by alerting compliance teams or freezing suspicious accounts — all without the need for human intervention. Plus, teams of AI agents can work with other systems to retrieve additional data, simulate potential fraud scenarios and investigate abnormalities.
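As a minimal sketch of what monitoring transaction patterns in real time can look like, the example below flags an amount that deviates sharply from an account's recent history. It uses a simple rolling z-score as a stand-in technique, not any institution's actual method; `TransactionMonitor`, its thresholds and the sample data are all hypothetical.

```python
from collections import defaultdict, deque
from statistics import mean, pstdev


class TransactionMonitor:
    """Flags amounts that sit far outside an account's recent history."""

    def __init__(self, window: int = 20, threshold: float = 3.0,
                 min_history: int = 5) -> None:
        self.threshold = threshold      # z-score above which we flag
        self.min_history = min_history  # observations needed before judging
        # One bounded history of recent amounts per account.
        self.history = defaultdict(lambda: deque(maxlen=window))

    def observe(self, account: str, amount: float) -> bool:
        """Return True if the transaction looks suspicious."""
        past = self.history[account]
        suspicious = False
        if len(past) >= self.min_history:
            mu = mean(past)
            sigma = pstdev(past)
            # With zero spread there is no scale to compare against, so skip.
            if sigma > 0 and abs(amount - mu) / sigma > self.threshold:
                suspicious = True
        if not suspicious:
            # Only unflagged amounts update the baseline.
            past.append(amount)
        return suspicious


monitor = TransactionMonitor()
amounts = [20.0, 25.0, 22.0, 19.0, 24.0, 21.0, 5000.0]
flags = [monitor.observe("acct-1", a) for a in amounts]
print(flags)
```

In a fuller system, a flagged transaction would trigger a follow-up step such as alerting a compliance team. This sketch stops at the flag and does not learn new fraud types.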

AI agents make financial management easier, especially for bill payment and cash flow management. Because agentic AI supports machine-to-machine interactions in digital ecosystems, it can ensure regulatory compliance by automatically maintaining detailed audit trails. This reduces compliance costs and processing time, making it easier for financial institutions to operate in complex regulatory environments.

For capital markets, the most powerful investment insights are often hidden in unstructured text data from everyday document sources such as news articles, blogs and SEC filings. AI agents can accelerate intelligent document processing (IDP) to provide insight and investment recommendations for traders, enabling faster decision-making and reducing the risk of financial losses.

In consumer banking, handling documents like loan records, regulatory filings and transaction records involves a lot of complex data. The volume is so large that processing and understanding it manually is difficult and time-consuming. IDP helps solve this issue, using AI to identify document types, summarize documents, employ retrieval-augmented generation for answers and support, and organize data.

The data-driven insights from multi-agent systems inform strategic business decisions as these systems continuously learn from customer and institutional data using a data flywheel.

Many industry customers and partners have benefited significantly from integrating AI into their workflows.

For example, BlackRock uses Aladdin, a proprietary platform that unifies investment management processes across public and private markets for institutional investors.

With numerous Aladdin applications and thousands of specialized users, the BlackRock team identified an opportunity to use AI to streamline the platform’s user experience while fostering connectivity and operational efficiency. Rapidly and securely, BlackRock has bolstered the Aladdin platform with advanced AI through Aladdin Copilot.

Using a federated development model, where different teams can work on AI agents independently while building on a common foundation, BlackRock’s central AI team established a standardized communication system and plug-in registry. This allows the firm’s developers and data scientists to create and deploy AI agents tailored to their specific areas, improving intelligence and efficiency for clients.
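The general pattern of a plug-in registry on a common foundation can be sketched as follows. This is an illustrative example only, not BlackRock's implementation: `PluginRegistry`, the agent names and the string-in, string-out interface are all assumptions made for the sketch.

```python
from typing import Callable, Dict

# Assumption: every agent shares one simple interface (request in, reply out).
AgentFn = Callable[[str], str]


class PluginRegistry:
    """Central registry that independently built agents plug into."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentFn] = {}

    def register(self, name: str) -> Callable[[AgentFn], AgentFn]:
        """Decorator that adds an agent under a unique name."""
        def decorator(fn: AgentFn) -> AgentFn:
            if name in self._agents:
                raise ValueError(f"agent {name!r} is already registered")
            self._agents[name] = fn
            return fn
        return decorator

    def dispatch(self, name: str, request: str) -> str:
        """Route a request to the named agent."""
        if name not in self._agents:
            raise KeyError(f"no agent registered under {name!r}")
        return self._agents[name](request)


registry = PluginRegistry()


# Each team could define and register its own agent independently.
@registry.register("portfolio")
def portfolio_agent(request: str) -> str:
    return f"[portfolio] handling: {request}"


@registry.register("compliance")
def compliance_agent(request: str) -> str:
    return f"[compliance] handling: {request}"


print(registry.dispatch("portfolio", "summarize exposure"))
```

Because every agent conforms to the same interface, the central layer can route requests without knowing how any individual agent works, which is the property a federated model relies on.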

Another example is bunq’s generative AI platform, Finn, which offers users a range of features to help manage finances through an in-app chatbot. It can answer questions about money, provide insight into spending habits and offer tips on using the bunq app. Finn uses advanced AI to improve its responses based on feedback and, beyond the in-app chatbot, now handles over 90% of all users’ support tickets.

Capital One is also assisting customers with Chat Concierge, its multi-agent conversational AI assistant designed to enhance the automotive-buying experience. Consumers have 24/7 access to agents that provide real-time information and take action based on user requests. In a single conversation, Chat Concierge can perform tasks like comparing vehicles to help car buyers find their ideal choice and scheduling test drives or appointments with a sales team.

RBC’s latest platform for global research, Aiden, uses internal agents to automatically perform analysis when companies covered by RBC Capital Markets release SEC filings. Aiden has an orchestration agent working with other agents, such as the SEC filing agent, earnings agent and a real-time news agent.

The building blocks of a powerful financial services agent include:

Learn more about how financial services companies are using AI to enhance services and business operations in the full State of AI in Financial Services report.

Listen up, citizens, the law is back and patrolling the cloud. Nacon’s RoboCop Rogue City — Unfinished Business launches today in the cloud, bringing justice to every device, everywhere.

Log in, lock and load, and take charge with the ten games heading to the cloud this week.

Plus, Cyberpunk 2077’s 2.3 update amps up the chaos in Night City while Zenless Zone Zero’s 2.1 update delivers summer vibes — all instantly playable in the cloud.

Step into the titanium boots of RoboCop, the ultimate cybernetic law enforcer. Whether a veteran of the force or a rookie looking to protect the innocent, players will encounter a fresh case file packed with explosive action, tough choices, and that signature blend of grit and dry wit in RoboCop Rogue City — Unfinished Business.

Tackle a new storyline that puts players’ decision-making skills to the test, and wield the iconic RoboCop weapons while upholding the law.

With GeForce NOW, players won’t need a high-tech lab to experience high visual quality and lightning-fast gameplay. Stream the game instantly on mobile devices, laptops, SHIELD TV, Samsung and LG smart TVs, gaming handhelds or even that old PC that’s seen better days.

Cyberpunk 2077’s Update 2.3 is rolling out with a turbocharged dose of Night City attitude. Four new quests are dropping, each unlocking a fresh set of wheels — including the ARV-Q340 Semimaru from the “Cyberpunk Kickdown” comic and the Rayfield Caliburn “Mordred,” plucked from the personal collection of Yorinobu Arasaka himself. Getting around just got slicker, too, thanks to the new AutoDrive feature: Players can let their rides do the work while soaking in the neon skyline with cinematic camera angles. Those with eddies to burn can even call a Delamain cab to take them to their destination in style.

Almost every ride in the game is getting an upgrade through the expanded CrystalCoat and TwinTone technologies that let players customize their vehicle’s colors. Plus, Photo Mode is getting a serious glow-up. Add new characters, poses, outfits, stickers and frames to shots, and change the time of day to get the perfect lighting.

Those ready to hit the streets can stream Cyberpunk 2077’s latest update instantly on GeForce NOW and experience Night City’s new thrills from anywhere, on any device — at the highest-quality settings. No upgrades or fancy hardware needed, just pure, cutting-edge gameplay delivered straight from the cloud.

Catch Zenless Zone Zero’s 2.1 update, “The Impending Crash of Waves,” in the cloud without having to wait for downloads. The update brings a wave of summer content to the game. Explore two new regions — Sailume Bay and the Fantasy Resort — while diving into a fresh chapter of the main story featuring the Spook Shack faction. The update introduces two new S-Rank agents, Ukinami Yuzuha and Alice Thymefield, plus the S-Rank Bangboo Miss Esme, alongside summer-themed events, mini games — like surfing and fishing — new outfits and quality-of-life improvements.

In addition, members can look for the following:

What are you planning to play this weekend? Let us know on X or in the comments below.

What game soundtrack do you still hear in your head—years later?

— NVIDIA GeForce NOW (@NVIDIAGFN), July 15, 2025

Submissions for NVIDIA’s Plug and Play: Project G-Assist Plug-In Hackathon are due Sunday, July 20, at 11:59 p.m. PT. RTX AI Garage offers all the tools and resources to help.

The hackathon invites the community to expand the capabilities of Project G-Assist, an experimental AI assistant available through the NVIDIA App that helps users control and optimize NVIDIA GeForce RTX systems.

Entrants gain the chance to win a GeForce RTX 5090 laptop, or a limited NVIDIA GeForce RTX 5080 or RTX 5070 Founders Edition graphics card, plus NVIDIA Deep Learning Institute credits. Finalists may also be featured on NVIDIA’s social media channels.

Register for the hackathon and check out the curated technical resources below to bring these submissions to life.

When in the heat of a gaming moment or the flow of a creative project, interrupting one’s focus to navigate complex PC settings menus is a common frustration. For example, manually tweaking GPU performance or optimizing system parameters often requires leaving the user’s current application, which breaks concentration.

Enter Project G-Assist, which allows users to control their RTX GPU and other system settings using natural language. It’s powered by a small language model that runs on device and can be accessed directly from the NVIDIA overlay within the NVIDIA App — no need to tab out or switch programs.

Users can also expand its capabilities via plug-ins and even connect it to agentic frameworks such as Langflow. G-Assist plug-ins can be built in several ways, including with Python for rapid development, with C++ for performance-critical apps and with custom system interactions for hardware and operating system automation.

Project G-Assist requires a GeForce RTX 50, 40 or 30 Series Desktop GPU with at least 12GB of VRAM, a Windows 11 or 10 operating system, a compatible CPU (Intel Pentium G Series, Core i3, i5, i7 or higher; AMD FX, Ryzen 3, 5, 7, 9, Threadripper or higher), specific disk space requirements and a recent GeForce Game Ready Driver or NVIDIA Studio Driver.

As the hackathon’s submission deadline approaches this weekend, RTX AI Garage is providing resources that can help:

Sydney Altobell, a senior software engineer at NVIDIA, offers tips and tricks for working with G-Assist plug-ins in this on-demand webinar. The presentation and Q&A are available on the NVIDIA Developer YouTube channel and embedded below.

Fellow community developers are collaborating and sharing notes in the NVIDIA Developer Discord server. Altobell and the G-Assist engineering team have already answered many questions about plug-in submissions — keep the questions coming.

Plus, explore NVIDIA’s GitHub repository, which provides everything needed to get started developing with G-Assist, including step-by-step instructions and documentation for building custom functionalities. Take inspiration from sample plug-ins, which include code for using G-Assist to integrate into Discord, IFTTT, Google Gemini and more.

Learn more about the ChatGPT Plug-In Builder to transform ideas into functional G-Assist plug-ins with minimal coding. The tool uses OpenAI’s custom GPT builder to generate plug-in code and streamline the development process.

NVIDIA’s technical blog walks through the architecture of a G-Assist plug-in, using a Twitch integration as an example. Discover how plug-ins work, how they communicate with G-Assist and how to build them from scratch.

Find submission details and requirements on the Hackathon entry page.

This month, NVIDIA founder and CEO Jensen Huang promoted AI in both Washington, D.C., and Beijing — emphasizing the benefits that AI will bring to business and society worldwide.

In the U.S. capital, Huang met with President Trump and U.S. policymakers, reaffirming NVIDIA’s support for the Administration’s effort to create jobs, strengthen domestic AI infrastructure and onshore manufacturing, and ensure that America leads in AI worldwide.

In Beijing, Huang met with government and industry officials to discuss how AI will raise productivity and expand opportunity. The discussions underscored how researchers worldwide can advance safe and secure AI for the benefit of all.

Huang also provided an update to customers, noting that NVIDIA is filing applications to sell the NVIDIA H20 GPU again. The U.S. government has assured NVIDIA that licenses will be granted, and NVIDIA hopes to start deliveries soon. Finally, Huang announced a new, fully compliant NVIDIA RTX PRO GPU that “is ideal for digital twin AI for smart factories and logistics.”

As Huang noted during his visits, the world has reached an inflection point — AI has become a fundamental resource, like energy, water and the internet. Huang emphasized NVIDIA’s commitment to support open-source research, foundation models and applications, which democratize AI and will empower emerging economies in every region, including Latin America, Europe, Asia and beyond.

“General-purpose, open-source research and foundation models are the backbone of AI innovation,” Huang explained to reporters in D.C. “We believe that every civil model should run best on the U.S. technology stack, encouraging nations worldwide to choose America.”

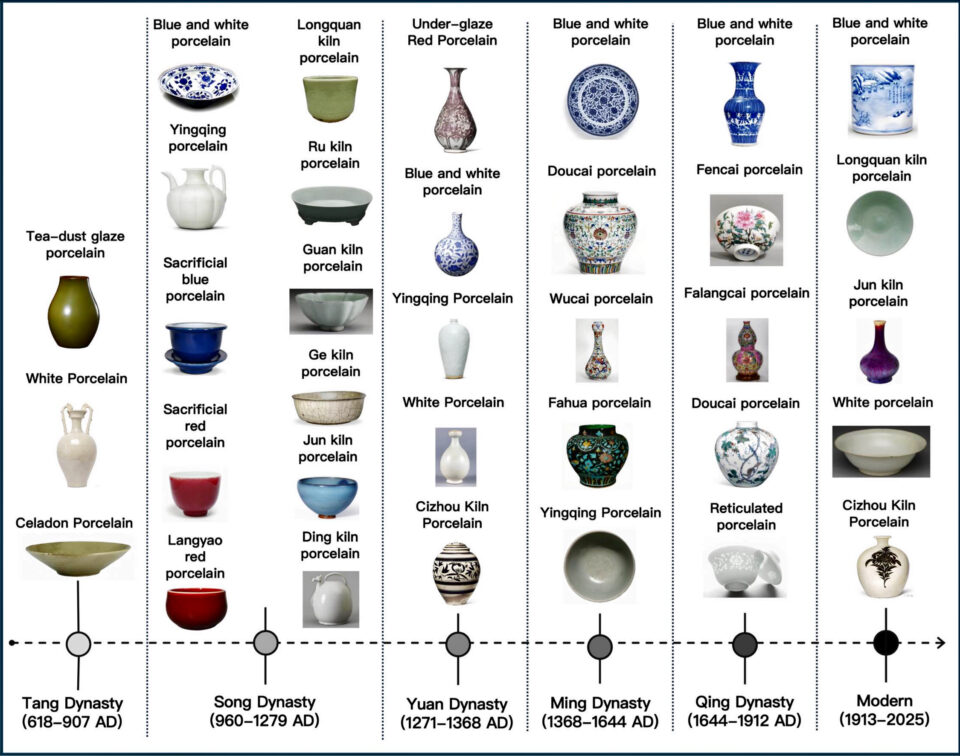

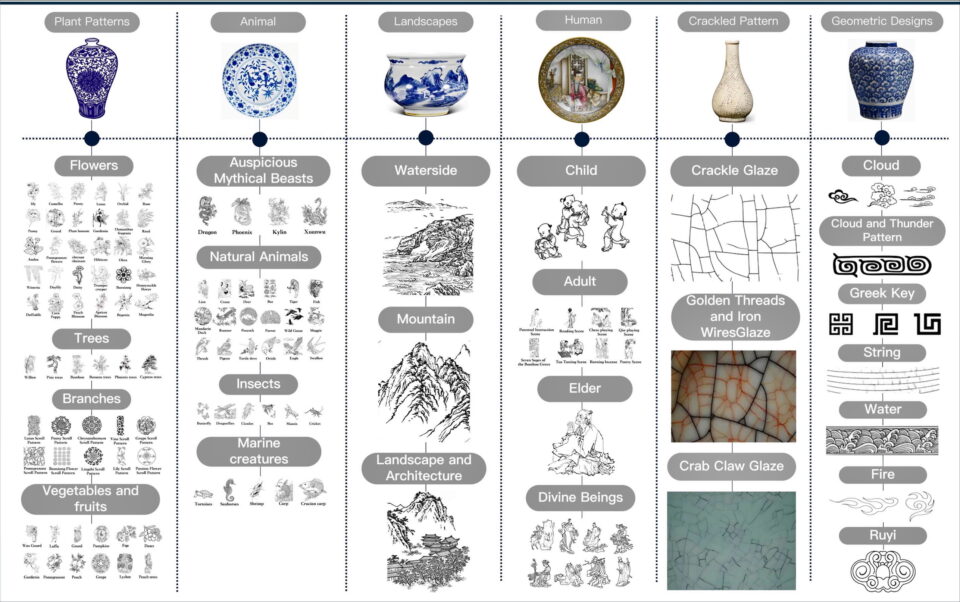

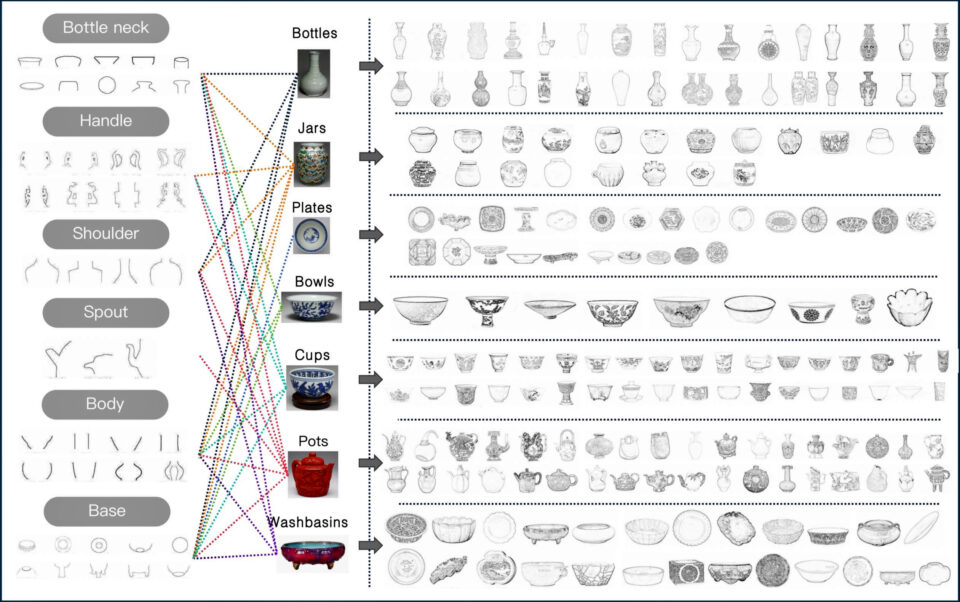

Ceramics — the humble mix of earth, fire and artistry — have been part of a global conversation for millennia.

From Tang Dynasty trade routes to Renaissance palaces, from museum vitrines to high-stakes auction floors, they’ve carried culture across borders, evolving into status symbols, commodities and pieces of contested history. Their value has been shaped by aesthetics and economics, empire and, now, technology.

In a lab at Universiti Putra Malaysia, that legacy meets silicon. Researchers there, alongside colleagues at UNSW Sydney, have built an AI system that can classify Chinese ceramics and predict their value with uncanny precision. The tool uses deep learning to analyze decorative motifs, shapes and kiln-specific craftsmanship. It predicts price categories based on real auction data from institutions like Sotheby’s and Christie’s, achieving test accuracy as high as 99%.

It’s all powered by an NVIDIA GeForce RTX 3090, a consumer-grade GPU beloved by gamers, explains Siqi Wu, one of the researchers behind the project. Not a data center, not specialized industrial hardware, just the same chip pushing frame rates for gamers enjoying Cyberpunk 2077 and Alan Wake 2 across the world.

The motivation is as old as the trade routes those ceramics once traveled: access, but in this case, access to expertise rather than material goods.

“Artifact pricing and dating still heavily rely on expert judgment,” Wu said. That expertise remains elusive for younger collectors, smaller institutions and digital archive projects. Wu’s team aims to change that by making cultural appraisal more objective, scalable and accessible to a wider audience.

It doesn’t stop at classification. The system pairs its YOLOv11-based detection model with an algorithm that learned market value directly from years of real-world auction results. In one test, the AI assessed a Ming Dynasty artifact at roughly 30% below its final hammer price. It’s a reminder that even in an industry steeped in tradition, algorithms can offer new perspectives.

Those perspectives don’t just quantify heritage, they extend the conversation. The team is already exploring AI for other forms of cultural visual heritage, from Cantonese opera costumes to historical murals.

For now, a graphics card built for gaming is parsing centuries of craftsmanship and entering one of the world’s oldest and most global debates: what makes something valuable?

As one of the world’s largest emerging markets, Indonesia is making strides toward its “Golden 2045 Vision” — an initiative tapping digital technologies and bringing together government, enterprises, startups and higher education to enhance productivity, efficiency and innovation across industries.

Building out the nation’s AI infrastructure is a crucial part of this plan.

That’s why Indonesian telecommunications leader Indosat Ooredoo Hutchison, aka Indosat or IOH, has partnered with Cisco and NVIDIA to support the establishment of Indonesia’s AI Center of Excellence (CoE). Led by the Ministry of Communications and Digital Affairs, called Komdigi, the CoE aims to advance secure technologies, cultivate local talent and foster innovation through collaboration with startups.

Indosat Ooredoo Hutchison President Director and CEO Vikram Sinha, Cisco Chair and CEO Chuck Robbins and NVIDIA Senior Vice President of Telecom Ronnie Vasishta today detailed the purpose and potential of the CoE during a fireside chat at Indonesia AI Day, a conference focused on how artificial intelligence can fuel the nation’s digital independence and economic growth.

As part of the CoE, a new NVIDIA AI Technology Center will offer research support, NVIDIA Inception program benefits for eligible startups, and NVIDIA Deep Learning Institute training and certification to upskill local talent.

“With the support of global partners, we’re accelerating Indonesia’s path to economic growth by ensuring Indonesians are not just users of AI, but creators and innovators,” Sinha said.

“The AI era demands fundamental architectural shifts and a workforce with digital skills to thrive,” Robbins said. “Together with Indosat, NVIDIA and Komdigi, Cisco will securely power the AI Center of Excellence — enabling innovation and skills development, and accelerating Indonesia’s growth.”

“Democratizing AI is more important than ever,” Vasishta added. “Through the new NVIDIA AI Technology Center, we’re helping Indonesia build a sustainable AI ecosystem that can serve as a model for nations looking to harness AI for innovation and economic growth.”

The Indonesia AI CoE will comprise an AI factory that features full-stack NVIDIA AI infrastructure — including NVIDIA Blackwell GPUs, NVIDIA Cloud Partner reference architectures and NVIDIA AI Enterprise software — as well as an intelligent security system powered by Cisco.

Called the Sovereign Security Operations Center Cloud Platform, the Cisco-powered system combines AI-based threat detection, localized data control and managed security services for the AI factory.

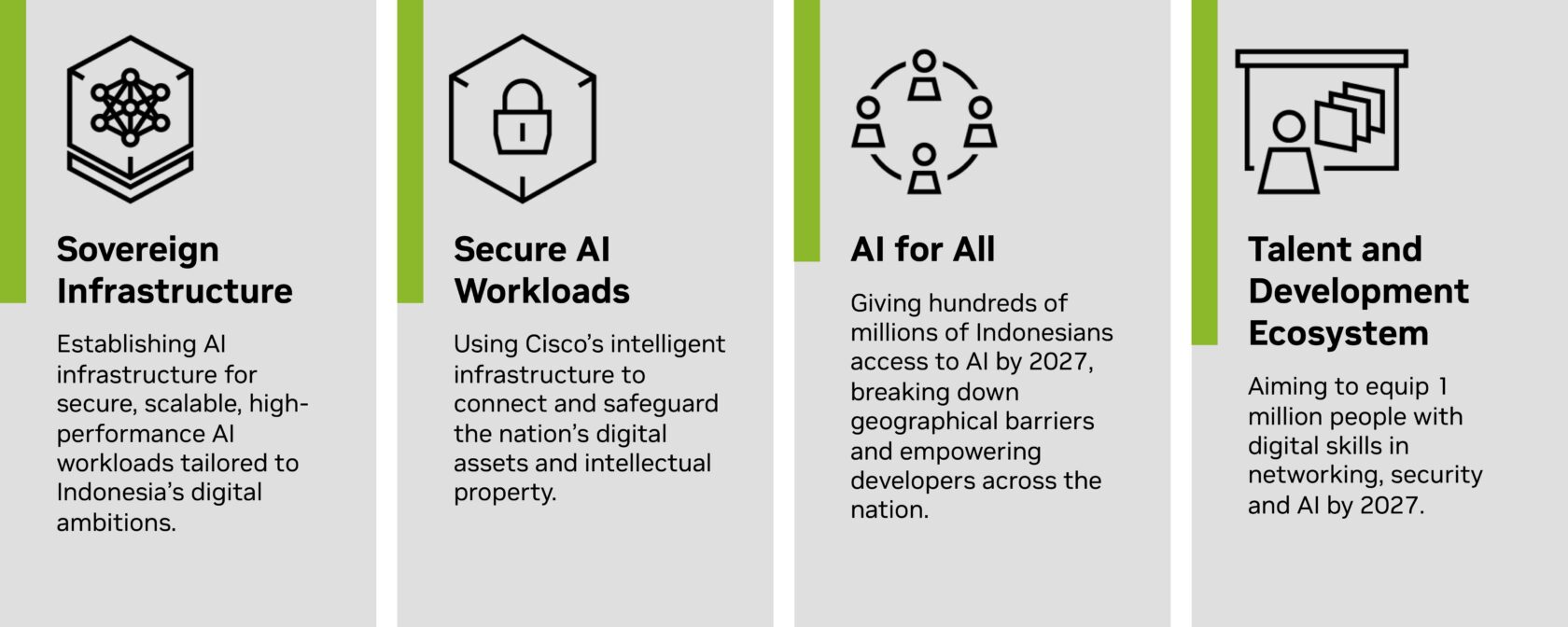

Building on the sovereign AI initiatives Indonesia’s technology leaders announced with NVIDIA last year, the CoE will bolster the nation’s AI strategy through four core pillars:

Some 28 independent software vendors and startups are already using IOH’s NVIDIA-powered AI infrastructure to develop cutting-edge technologies that can speed and ease workflows across higher education and research, food security, bureaucratic reform, smart cities and mobility, and healthcare.

With Indosat’s coverage across the archipelago, the company can reach hundreds of millions of Bahasa Indonesian speakers with its large language model (LLM)-powered applications.

For example, using Indosat’s Sahabat-AI collection of Bahasa Indonesian LLMs, the Indonesian government and Hippocratic AI are collaborating to develop an AI agent system that provides preventative outreach capabilities, such as helping women subscribers over the age of 50 schedule a mammogram. This can help prevent or combat breast cancer and other health complications across the population.

Separately, Sahabat-AI also enables Indosat’s AI chatbot to answer queries in the Indonesian language for various citizen and resident services. A person could ask about processes for updating their national identification card, as well as about tax rates, payment procedures, deductions and more.

In addition, a government-led forum is developing trustworthy AI frameworks tailored to Indonesian values for the safe, responsible development of artificial intelligence and related policies.

Looking forward, Indosat and NVIDIA plan to deploy AI-RAN technologies that can reach even broader audiences using AI over wireless networks.

Learn more about NVIDIA-powered AI infrastructure for telcos.

Grab a friend and climb toward the clouds — PEAK is now available on GeForce NOW, enabling members to try the hugely popular indie hit on virtually any device.

It’s one of four new games joining the cloud this week. Plus, members can look forward to Tony Hawk’s Pro Skater 3 + 4 coming soon.

PEAK is a co-op climbing game that puts players in the shoes of lost nature scouts, ascending a mountain at the center of a mysterious island. Scavenge for food (even if it’s of questionable quality), manage injuries on the climb and help the squad summit safely. Members can play solo or survive together with up to four players.

There’s a new island to survive on every day. And, with more than 100,000 concurrent players daily on Steam, there’s always a climbing buddy to join.

GeForce NOW members are equipped for the challenge with an Ultimate membership, which powers the climb at up to 4K resolution and 120 frames per second. Ultimate members can play PEAK and more than 2,000 other games they already own with extended session lengths and ultralow latency on a GeForce RTX 4080 rig. Upgrade today for elevated gameplay.

Tony Hawk’s Pro Skater 3 + 4 is coming soon to GeForce NOW.

The legendary franchise that taught generations to ollie, grind and combo like maniacs is back, helping members hit the 900 from nearly any device.

The Birdman and crew return, bringing all the classic parks, legendary skaters and iconic soundtrack gamers remember — now fully remade with a few wild surprises for players.

Relive the glory days, whether grinding rails in the airport or pulling off insane combos in Los Angeles. New environments like a water park add a creative twist. The roster is stacked with original legends and new faces — plus a few unexpected guests.

Career Mode delivers with heart-pounding runs, while New Game+ and Solo Tours keep the challenge alive. Take on friends and rivals in online multiplayer mode. The upgraded Create-a-Park and Create-a-Skater tools mean gamers can build, style and shred their way.

With GeForce NOW, gamers will soon be able to skate anywhere, anytime — no console required. Enjoy the title in stunning 4K resolution and ultrasmooth frame rates with an Ultimate membership powered by GeForce RTX 4080 servers. Drop in instantly on any device, chase high scores with the lowest latency and keep the shred alive. The ultimate skate session is always just a click away.

Catch Every Day We Fight, a new roguelite, turn-based tactics game from Signal Space Lab and Hooded Horse, in the cloud with GeForce NOW.

In this title, citizens from either side of an ongoing war must set aside their differences as a mysterious alien invasion threatens humanity — and time has come to a stop for all but a small band of freedom fighters. Caught in a seemingly endless loop, players must shape these ordinary civilians into heroes as they repeatedly fight and die. Real-time exploration, stealth and teamwork are essential to acquire new skills, seek out more powerful weapons, escape the time loop and save the world.

Members can look for the following games to stream:

What are you planning to play this weekend? Let us know on X or in the comments below.

Which game demands the fastest reflexes you’ve got?

—

NVIDIA GeForce NOW (@NVIDIAGFN) July 9, 2025

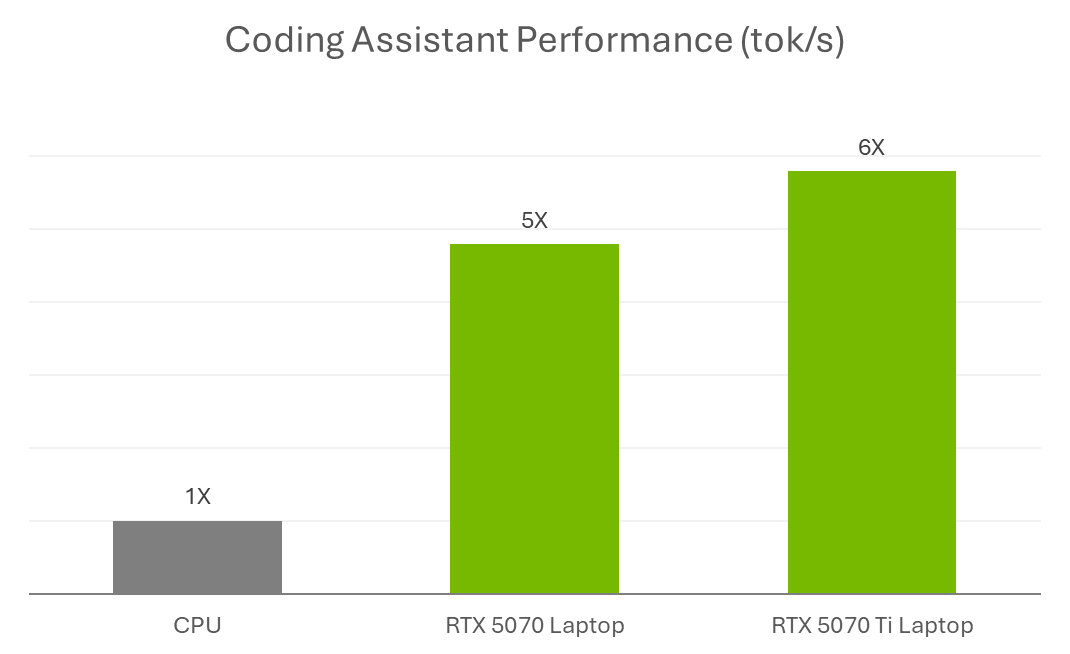

Coding assistants or copilots — AI-powered assistants that can suggest, explain and debug code — are fundamentally changing how software is developed for both experienced and novice developers.

Experienced developers use these assistants to stay focused on complex coding tasks, reduce repetitive work and explore new ideas more quickly. Newer coders — like students and AI hobbyists — benefit from coding assistants that accelerate learning by describing different implementation approaches or explaining what a piece of code is doing and why.

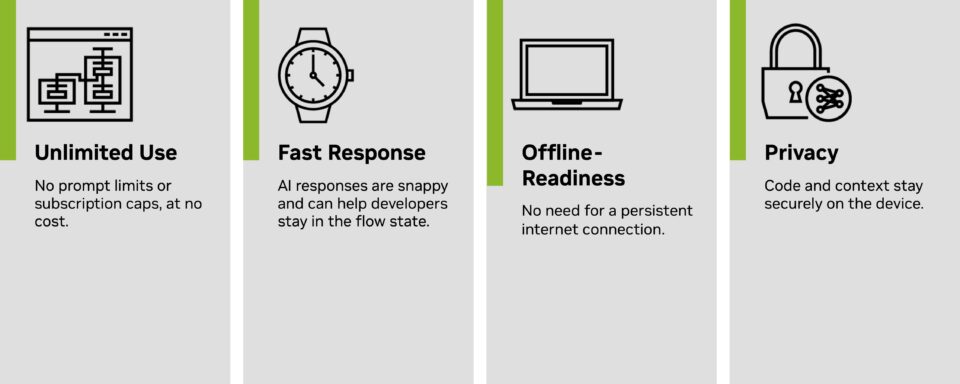

Coding assistants can run in cloud environments or locally. Cloud-based coding assistants can be run anywhere but come with some limitations and require a subscription. Local coding assistants remove these issues but require performant hardware to operate well.

NVIDIA GeForce RTX GPUs provide the necessary hardware acceleration to run local assistants effectively.

Traditional software development includes many mundane tasks such as reviewing documentation, researching examples, setting up boilerplate code, authoring code with appropriate syntax, tracing down bugs and documenting functions. These are essential tasks that can take time away from problem solving and software design. Coding assistants help streamline such steps.

Many AI assistants are linked with popular integrated development environments (IDEs) like Microsoft Visual Studio Code or JetBrains’ PyCharm, which embed AI support directly into existing workflows.

There are two ways to run coding assistants: in the cloud or locally.

Cloud-based coding assistants require source code to be sent to external servers before responses are returned. This approach can be laggy and impose usage limits. Some developers prefer to keep their code local, especially when working with sensitive or proprietary projects. Plus, many cloud-based assistants require a paid subscription to unlock full functionality, which can be a barrier for students, hobbyists and teams that need to manage costs.

Coding assistants run in a local environment, enabling cost-free access with:

Tools that make it easy to run coding assistants locally include:

These tools support models served through frameworks like Ollama or llama.cpp, and many are now optimized for GeForce RTX and NVIDIA RTX PRO GPUs.
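
As a rough illustration of what such tools do behind the scenes, the sketch below sends a prompt to a model served locally by Ollama through its REST API. It assumes Ollama is running on its default local port (11434). The model tag `gemma3:12b` and the helper names `build_request` and `explain_code` are placeholders chosen for this example, not something taken from the tools above.

```python
import json
import urllib.request

# Ollama's default local endpoint; nothing leaves the machine.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_request(prompt: str, model: str = "gemma3:12b") -> dict:
    """Build the JSON payload for Ollama's /api/generate endpoint.

    The model tag is an assumption; use whichever model is installed locally.
    """
    return {"model": model, "prompt": prompt, "stream": False}


def explain_code(snippet: str, model: str = "gemma3:12b") -> str:
    """Ask a locally served model to explain a code snippet."""
    payload = build_request(f"Explain what this code does:\n\n{snippet}", model)
    request = urllib.request.Request(
        OLLAMA_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
    )
    # With "stream": False the server returns one JSON object
    # whose "response" field holds the generated text.
    with urllib.request.urlopen(request, timeout=120) as reply:
        return json.loads(reply.read())["response"]


# Example call (requires a running Ollama server with the model pulled):
# print(explain_code("def add(a, b):\n    return a + b"))
```

Editor integrations typically wrap a call like this with project context and chat history, but the request shape is the same.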

Running on a GeForce RTX-powered PC, Continue.dev paired with the Gemma 12B Code LLM helps explain existing code, explore search algorithms and debug issues — all entirely on device. Acting like a virtual teaching assistant, the assistant provides plain-language guidance, context-aware explanations, inline comments and suggested code improvements tailored to the user’s project.

This workflow highlights the advantage of local acceleration: the assistant is always available, responds instantly and provides personalized support, all while keeping the code private on device and making the learning experience immersive.

That level of responsiveness comes down to GPU acceleration. Models like Gemma 12B are compute-heavy, especially when they’re processing long prompts or working across multiple files. Running them locally without a GPU can feel sluggish — even for simple tasks. With RTX GPUs, Tensor Cores accelerate inference directly on the device, so the assistant is fast, responsive and able to keep up with an active development workflow.

Whether used for academic work, coding bootcamps or personal projects, RTX AI PCs are enabling developers to build, learn and iterate faster with AI-powered tools.

For those just getting started — especially students building their skills or experimenting with generative AI — NVIDIA GeForce RTX 50 Series laptops feature specialized AI technologies that accelerate top applications for learning, creating and gaming, all on a single system. Explore RTX laptops ideal for back-to-school season.

And to encourage AI enthusiasts and developers to experiment with local AI and extend the capabilities of their RTX PCs, NVIDIA is hosting a Plug and Play: Project G-Assist Plug-In Hackathon — running virtually through Wednesday, July 16. Participants can create custom plug-ins for Project G-Assist, an experimental AI assistant designed to respond to natural language and extend across creative and development tools. It’s a chance to win prizes and showcase what’s possible with RTX AI PCs.

Join NVIDIA’s Discord server to connect with community developers and AI enthusiasts for discussions on what’s possible with RTX AI.

Each week, the RTX AI Garage blog series features community-driven AI innovations and content for those looking to learn more about NVIDIA NIM microservices and AI Blueprints, as well as building AI agents, creative workflows, digital humans, productivity apps and more on AI PCs and workstations.

Plug in to NVIDIA AI PC on Facebook, Instagram, TikTok and X — and stay informed by subscribing to the RTX AI PC newsletter.

Follow NVIDIA Workstation on LinkedIn and X.

See notice regarding software product information.

The forecast this month is showing a 100% chance of epic gaming. Catch the scorching lineup of 20 titles coming to the cloud, which gamers can play whether indoors or on the go.

Six new games are landing on GeForce NOW this week, including launch day titles Figment and Little Nightmares II.

And to make the summer even hotter, the GeForce NOW Summer Sale is in full swing. It’s the last chance to upgrade to a six-month Performance membership for just $29.99 and stream top titles like the recently released classic Borderlands series, DOOM: The Dark Ages, FBC: Firebreak, and more with GeForce RTX power.

In Figment, a whimsical action-adventure game set in the human mind, players guide Dusty — the grumpy, retired voice of courage — and his upbeat companion Piper on a surreal journey to restore lost bravery after a traumatic event. Blending hand-drawn visuals, clever puzzles and musical boss battles, Figment explores themes of fear, grief and emotional healing in a colorful, dreamlike world filled with humor and song.

In addition, members can look for the following games to stream this week:

Here’s what’s coming in the rest of July:

In addition to the 25 games announced last month, 11 more joined the GeForce NOW library:

What are you planning to play this weekend? Let us know on X or in the comments below.

New month, new energy.

What are your cloud gaming goals for July?

—

NVIDIA GeForce NOW (@NVIDIAGFN) July 2, 2025

Black Forest Labs, one of the world’s leading AI research labs, just changed the game for image generation.

The lab’s FLUX.1 image models have earned global attention for delivering high-quality visuals with exceptional prompt adherence. Now, with its new FLUX.1 Kontext model, the lab is fundamentally changing how users can guide and refine the image generation process.

To get their desired results, AI artists today often use a combination of models and ControlNets — AI models that help guide the outputs of an image generator. This commonly involves combining multiple ControlNets or using advanced techniques like the one used in the NVIDIA AI Blueprint for 3D-guided image generation, where a draft 3D scene is used to determine the composition of an image.

The new FLUX.1 Kontext model simplifies this by providing a single model that can perform both image generation and editing, using natural language.

NVIDIA has collaborated with Black Forest Labs to optimize FLUX.1 Kontext [dev] for NVIDIA RTX GPUs using the NVIDIA TensorRT software development kit and quantization to deliver faster inference with lower VRAM requirements.

For creators and developers alike, TensorRT optimizations mean faster edits, smoother iteration and more control — right from their RTX-powered machines.

Black Forest Labs in May introduced the FLUX.1 Kontext family of image models, which accept both text and image prompts.

These models allow users to start from a reference image and guide edits with simple language, without the need for fine-tuning or complex workflows with multiple ControlNets.

FLUX.1 Kontext is an open-weight generative model built for image editing using a guided, step-by-step generation process that makes it easier to control how an image evolves, whether refining small details or transforming an entire scene. Because the model accepts both text and image inputs, users can easily reference a visual concept and guide how it evolves in a natural and intuitive way. This enables coherent, high-quality image edits that stay true to the original concept.

FLUX.1 Kontext’s key capabilities include:

Black Forest Labs last week released FLUX.1 Kontext weights for download on Hugging Face, as well as the corresponding TensorRT-accelerated variants.

The [dev] model emphasizes flexibility and control. It supports capabilities like character consistency, style preservation and localized image adjustments, with integrated ControlNet functionality for structured visual prompting.

FLUX.1 Kontext [dev] is already available in ComfyUI and the Black Forest Labs Playground, with an NVIDIA NIM microservice version expected to release in August.

FLUX.1 Kontext [dev] accelerates creativity by simplifying complex workflows. To further streamline the work and broaden accessibility, NVIDIA and Black Forest Labs collaborated to quantize the model — reducing the VRAM requirements so more people can run it locally — and optimized it with TensorRT to double its performance.

The quantization step enables the model size to be reduced from 24GB to 12GB for FP8 (Ada) and 7GB for FP4 (Blackwell). The FP8 checkpoint is optimized for GeForce RTX 40 Series GPUs, which have FP8 accelerators in their Tensor Cores. The FP4 checkpoint is optimized for GeForce RTX 50 Series GPUs for the same reason and uses a new method called SVDQuant, which preserves high image quality while reducing model size.
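
The sizes above follow roughly from bits per weight. The back-of-the-envelope sketch below assumes a model of about 12 billion parameters. That figure is an assumption not stated in this article, and `weight_size_gb` is a hypothetical helper.

```python
def weight_size_gb(num_params: float, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 12e9  # assumption: roughly 12 billion parameters

for name, bits in [("BF16", 16), ("FP8", 8), ("FP4", 4)]:
    print(f"{name}: ~{weight_size_gb(PARAMS, bits):.0f} GB")
```

Under that assumption the estimate gives about 24 GB for BF16 and 12 GB for FP8, matching the figures above. The naive FP4 estimate is about 6 GB, slightly below the 7GB quoted, which would be consistent with some tensors or scaling factors being kept at higher precision. That explanation is a guess rather than something the article confirms.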

TensorRT — a framework to access the Tensor Cores in NVIDIA RTX GPUs for maximum performance — provides over 2x acceleration compared with running the original BF16 model with PyTorch.

FLUX.1 Kontext [dev] is available on Hugging Face (Torch and TensorRT).

AI enthusiasts interested in testing these models can download the Torch variants and use them in ComfyUI. Black Forest Labs has also made available an online playground for testing the model.

For advanced users and developers, NVIDIA is working on sample code for easy integration of TensorRT pipelines into workflows. Check out the DemoDiffusion repository, coming later this month.

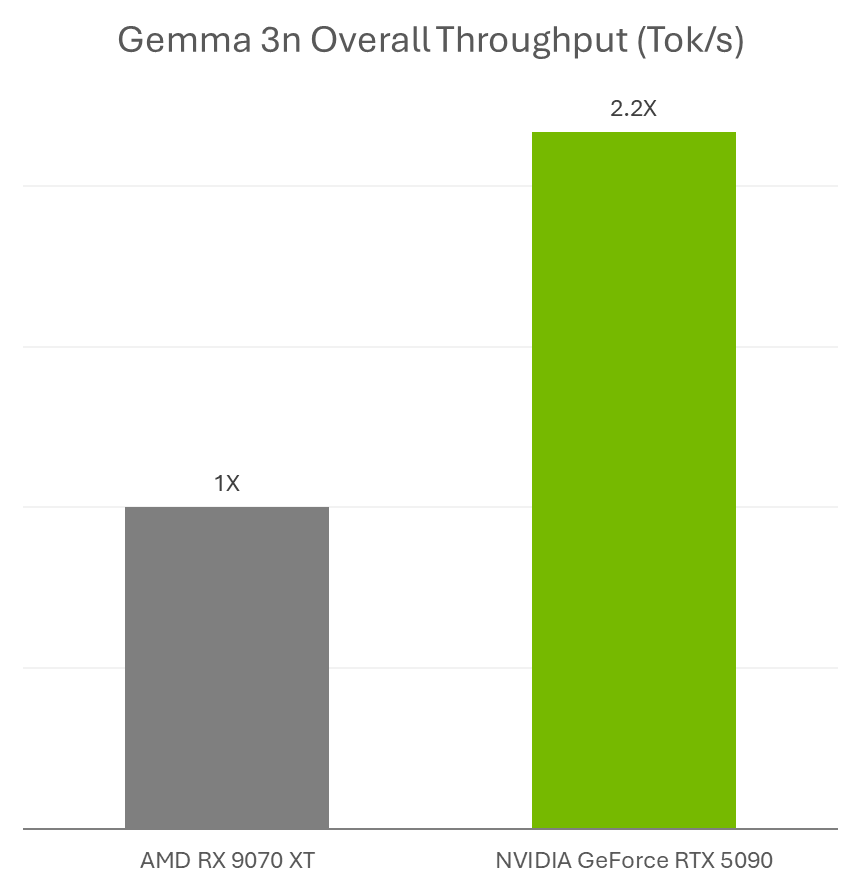

Google last week announced the release of Gemma 3n, a new multimodal small language model ideal for running on NVIDIA GeForce RTX GPUs and the NVIDIA Jetson platform for edge AI and robotics.

AI enthusiasts can use Gemma 3n models with RTX accelerations in Ollama and llama.cpp with their favorite apps, such as AnythingLLM and LM Studio.

Plus, developers can easily deploy Gemma 3n models using Ollama and benefit from RTX accelerations. Learn more about how to run Gemma 3n on Jetson and RTX.

In addition, NVIDIA’s Plug and Play: Project G-Assist Plug-In Hackathon — running virtually through Wednesday, July 16 — invites developers to explore AI and build custom G-Assist plug-ins for a chance to win prizes. Save the date for the G-Assist Plug-In webinar on Wednesday, July 9, from 10-11 a.m. PT, to learn more about Project G-Assist capabilities and fundamentals, and to participate in a live Q&A session.

In many parts of the world, including major technology hubs in the U.S., there’s a yearslong wait for AI factories to come online, pending the buildout of new energy infrastructure to power them.

Emerald AI, a startup based in Washington, D.C., is developing an AI solution that could enable the next generation of data centers to come online sooner by tapping existing energy resources in a more flexible and strategic way.

“Traditionally, the power grid has treated data centers as inflexible — energy system operators assume that a 500-megawatt AI factory will always require access to that full amount of power,” said Varun Sivaram, founder and CEO of Emerald AI. “But in moments of need, when demands on the grid peak and supply is short, the workloads that drive AI factory energy use can now be flexible.”

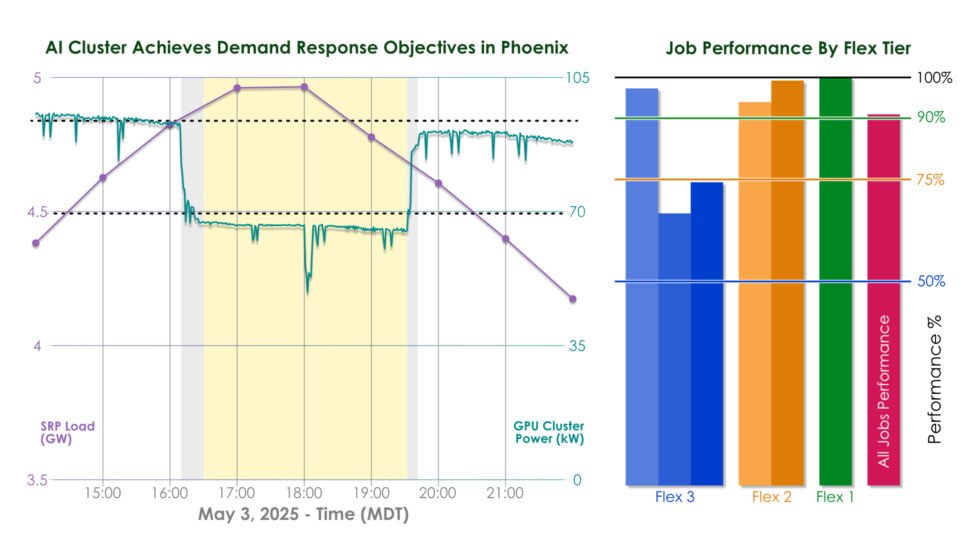

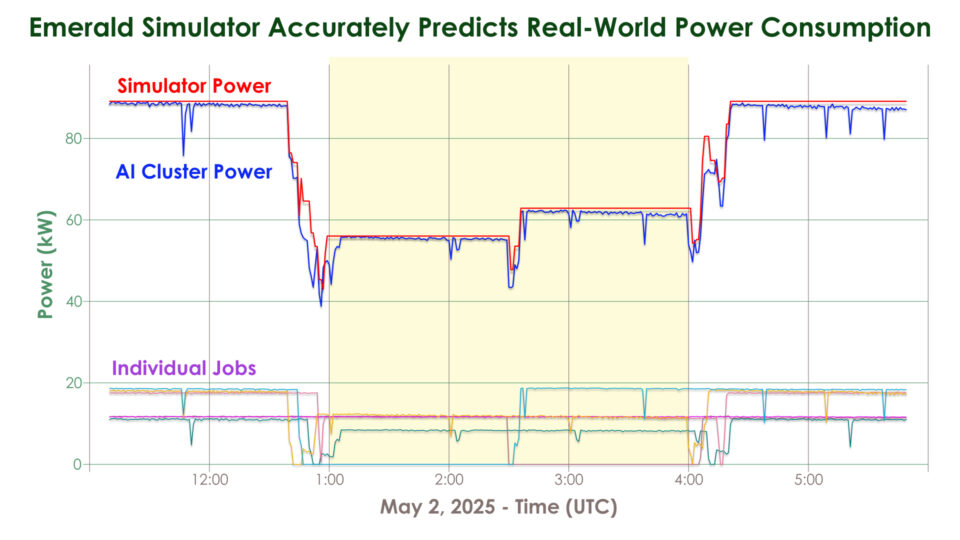

That flexibility is enabled by the startup’s Emerald Conductor platform, an AI-powered system that acts as a smart mediator between the grid and a data center. In a recent field test in Phoenix, Arizona, the company and its partners demonstrated that its software can reduce the power consumption of AI workloads running on a cluster of 256 NVIDIA GPUs by 25% over three hours during a grid stress event while preserving compute service quality.

Emerald AI achieved this by orchestrating the host of different workloads that AI factories run. Some jobs can be paused or slowed, like the training or fine-tuning of a large language model for academic research. Others, like inference queries for an AI service used by thousands or even millions of people, can’t be rescheduled, but could be redirected to another data center where the local power grid is less stressed.

Emerald Conductor coordinates these AI workloads across a network of data centers to meet power grid demands, ensuring full performance of time-sensitive workloads while dynamically reducing the throughput of flexible workloads within acceptable limits.
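
To make the idea concrete, here is a toy sketch of flexibility-aware power planning. It is not Emerald Conductor's actual algorithm: the `Workload` fields, the proportional-sharing rule and all numbers are hypothetical, chosen only to show how time-sensitive jobs can be left untouched while flexible jobs absorb a reduction target.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    power_kw: float
    max_cut: float  # largest tolerable fractional power cut; 0.0 = time-sensitive


def plan_power_cuts(workloads, target_fraction):
    """Return {name: kW to shed} so total draw falls by target_fraction.

    Cuts are shared in proportion to each job's headroom (power_kw * max_cut),
    so jobs with max_cut == 0.0 are never throttled.
    Raises ValueError if the flexible jobs cannot cover the target.
    """
    total = sum(w.power_kw for w in workloads)
    needed = total * target_fraction
    headroom = {w.name: w.power_kw * w.max_cut for w in workloads}
    available = sum(headroom.values())
    if needed > available + 1e-9:
        raise ValueError("flexible workloads cannot absorb the requested cut")
    scale = needed / available if available else 0.0
    return {name: room * scale for name, room in headroom.items()}


# Hypothetical cluster: one latency-sensitive service and two flexible jobs.
jobs = [
    Workload("chat-inference", 400.0, 0.0),
    Workload("llm-fine-tune", 300.0, 0.5),
    Workload("batch-inference", 300.0, 0.6),
]
cuts = plan_power_cuts(jobs, 0.25)
for name, kw in cuts.items():
    print(f"{name}: shed {kw:.1f} kW")
```

In this example the inference service keeps its full power budget, and the two flexible jobs together shed 250 kW, a quarter of the 1,000 kW total. A production system would also need to handle rerouting across sites and job deadlines, which this sketch leaves out.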

Beyond helping AI factories come online using existing power systems, this ability to modulate power usage could help cities avoid rolling blackouts, protect communities from rising utility rates and make it easier for the grid to integrate clean energy.

“Renewable energy, which is intermittent and variable, is easier to add to a grid if that grid has lots of shock absorbers that can shift with changes in power supply,” said Ayse Coskun, Emerald AI’s chief scientist and a professor at Boston University. “Data centers can become some of those shock absorbers.”

A member of the NVIDIA Inception program for startups and an NVentures portfolio company, Emerald AI today announced more than $24 million in seed funding. Its Phoenix demonstration, part of EPRI’s DCFlex data center flexibility initiative, was executed in collaboration with NVIDIA, Oracle Cloud Infrastructure (OCI) and the regional power utility Salt River Project (SRP).

“The Phoenix technology trial validates the vast potential of an essential element in data center flexibility,” said Anuja Ratnayake, who leads EPRI’s DCFlex Consortium.

EPRI is also leading the Open Power AI Consortium, a group of energy companies, researchers and technology companies — including NVIDIA — working on AI applications for the energy sector.

Electric grid capacity is typically underused except during peak events like hot summer days or cold winter storms, when there’s a high power demand for cooling and heating. That means, in many cases, there’s room on the existing grid for new data centers, as long as they can temporarily dial down energy usage during periods of peak demand.

A recent Duke University study estimates that if new AI data centers could flex their electricity consumption by just 25% for two hours at a time, less than 200 hours a year, they could unlock 100 gigawatts of new capacity to connect data centers — equivalent to over $2 trillion in data center investment.

Emerald AI’s recent trial was conducted in the Oracle Cloud Phoenix Region on NVIDIA GPUs spread across a multi-rack cluster managed through Databricks MosaicML.

“Rapid delivery of high-performance compute to AI customers is critical but is constrained by grid power availability,” said Pradeep Vincent, chief technical architect and senior vice president of Oracle Cloud Infrastructure, which supplied cluster power telemetry for the trial. “Compute infrastructure that is responsive to real-time grid conditions while meeting the performance demands unlocks a new model for scaling AI — faster, greener and more grid-aware.”

Jonathan Frankle, chief AI scientist at Databricks, guided the trial’s selection of AI workloads and their flexibility thresholds.

“There’s a certain level of latent flexibility in how AI workloads are typically run,” Frankle said. “Often, a small percentage of jobs are truly non-preemptible, whereas many jobs such as training, batch inference or fine-tuning have different priority levels depending on the user.”

Because Arizona is among the top states for data center growth, SRP set challenging flexibility targets for the AI compute cluster — a 25% power consumption reduction compared with baseline load — in an effort to demonstrate how new data centers can provide meaningful relief to Phoenix’s power grid constraints.

“This test was an opportunity to completely reimagine AI data centers as helpful resources to help us operate the power grid more effectively and reliably,” said David Rousseau, president of SRP.

On May 3, a hot day in Phoenix with high air-conditioning demand, SRP’s system experienced peak demand at 6 p.m. During the test, the data center cluster reduced consumption gradually with a 15-minute ramp down, maintained the 25% power reduction over three hours, then ramped back up without exceeding its original baseline consumption.
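
The shape of that response can be sketched as a simple power-cap profile. The linear ramps and the 15-minute ramp back up are assumptions made for illustration, since the article specifies only the ramp-down time, the 25% reduction and the three-hour hold. The function name `power_cap_fraction` is likewise hypothetical.

```python
def power_cap_fraction(minutes_since_start: float,
                       reduction: float = 0.25,
                       ramp_min: float = 15.0,
                       hold_min: float = 180.0) -> float:
    """Fraction of baseline power allowed at a given minute of the event."""
    t = minutes_since_start
    end = 2 * ramp_min + hold_min
    if t <= 0 or t >= end:
        return 1.0                                    # outside the event
    if t < ramp_min:
        return 1.0 - reduction * (t / ramp_min)       # linear ramp down
    if t <= ramp_min + hold_min:
        return 1.0 - reduction                        # hold reduced level
    return 1.0 - reduction * (end - t) / ramp_min     # linear ramp back up


# Sample the profile every 30 minutes.
for minute in range(0, 211, 30):
    print(f"t={minute:3d} min -> {power_cap_fraction(minute):.3f} of baseline")
```

The cap never rises above 1.0, mirroring the requirement that the cluster return to baseline without overshooting it.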

AI factory users can label their workloads to guide Emerald’s software on which jobs can be slowed, paused or rescheduled — or, Emerald’s AI agents can make these predictions automatically.

Orchestration decisions were guided by the Emerald Simulator, which accurately models system behavior to optimize trade-offs between energy usage and AI performance. Historical grid demand data from provider Amperon confirmed that the AI cluster performed correctly during the grid’s peak period.

The International Energy Agency projects that electricity demand from data centers globally could more than double by 2030. In light of the anticipated demand on the grid, the state of Texas passed a law that requires data centers to ramp down consumption or disconnect from the grid at utilities’ requests during load shed events.

“In such situations, if data centers are able to dynamically reduce their energy consumption, they might be able to avoid getting kicked off the power supply entirely,” Sivaram said.

Looking ahead, Emerald AI is expanding its technology trials in Arizona and beyond — and it plans to continue working with NVIDIA to test its technology on AI factories.

“We can make data centers controllable while assuring acceptable AI performance,” Sivaram said. “AI factories can flex when the grid is tight — and sprint when users need them to.”

Learn more about NVIDIA Inception and explore AI platforms designed for power and utilities.

This GFN Thursday rolls out a new reward and games for GeForce NOW members. Whether hunting for hot new releases or rediscovering timeless classics, members can always find more ways to play, games to stream and perks to enjoy.

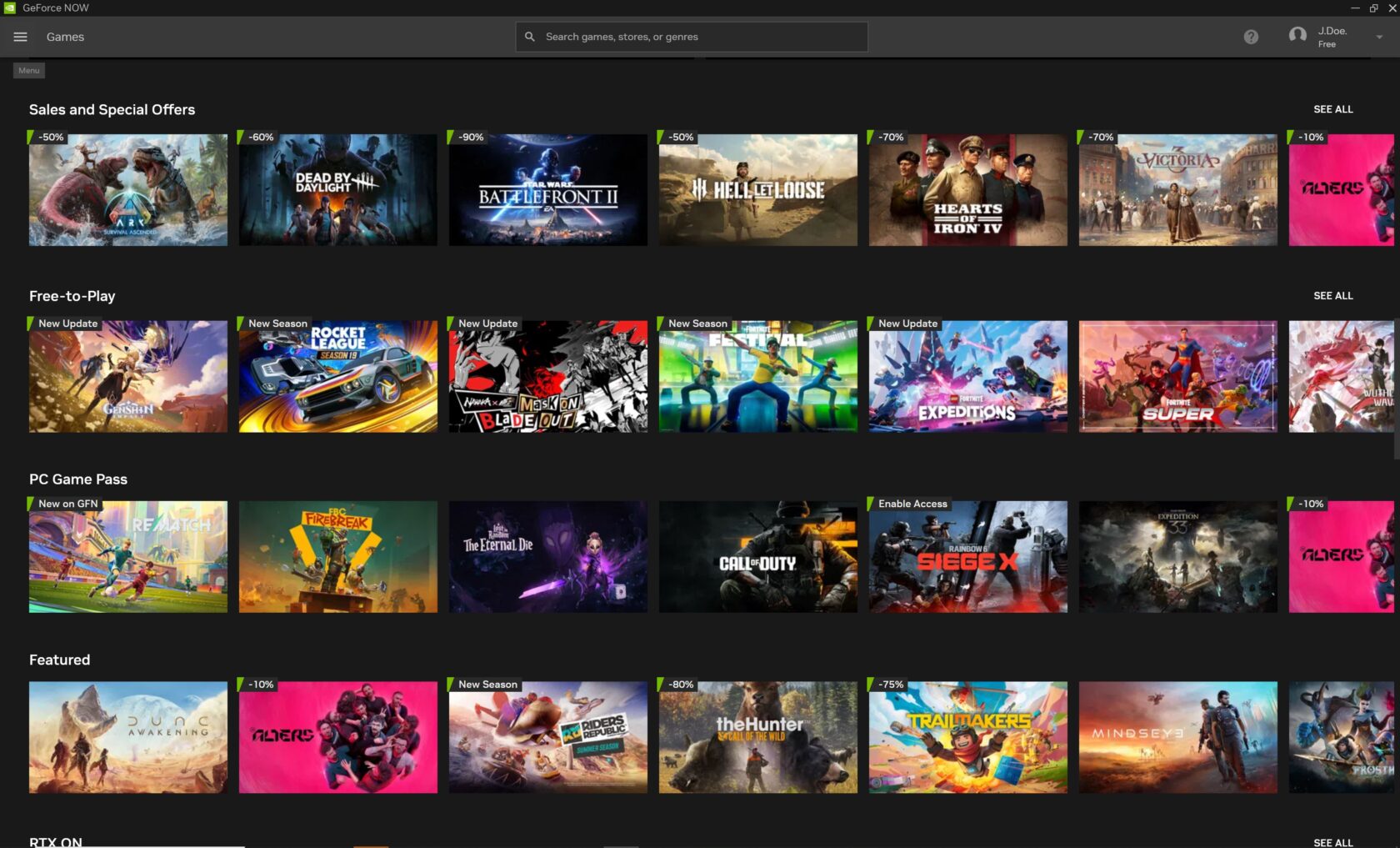

Gamers can score major discounts on the titles they’ve been eyeing — perfect for streaming in the cloud — during the Steam Summer Sale, running until Thursday, July 10, at 10 a.m. PT.

This week also brings unforgettable adventures to the cloud: We Happy Few and Broken Age are part of the five additions to the GeForce NOW library this week.

The fun doesn’t stop there. A new in-game reward for Elder Scrolls Online is now available for members to claim.

And SteelSeries has launched a new mobile controller that transforms phones into cloud gaming devices with GeForce NOW. Add it to the roster of on-the-go gaming devices — including the recently launched GeForce NOW app on Steam Deck for seamless 4K streaming.

GeForce NOW Premium members receive exclusive 24-hour early access to a new mythical reward in The Elder Scrolls Online — Bethesda’s award-winning role-playing game — before it opens to all members. Sharpen the sword, ready the staff and chase glory across the vast, immersive world of Tamriel.

Claim the mythical Grand Gold Coast Experience Scrolls reward, a rare item that grants a bonus of 150% Experience Points from all sources for one hour. The scroll’s effect pauses while players are offline and resumes upon return, ensuring every minute counts. Whether tackling dungeon runs, completing epic quests or leveling a new character, the scrolls provide a powerful edge. Claim the reward, harness its power and scroll into the next adventure.

Members who’ve opted into the GeForce NOW Rewards program can check their emails for redemption instructions. The offer runs through Saturday, July 26, while supplies last. Don’t miss this opportunity to become a legend in Tamriel.

The Steam Summer Sale is in full swing. Snag games at discounted prices and stream them instantly from the cloud — no downloads, no waiting, just pure gaming bliss.

Check out the “Steam Summer Sale” row in the GeForce NOW app to find deals on the next adventure. With GeForce NOW, gaming favorites are always just a click away.

While picking up discounted games, don’t miss the chance to get a GeForce NOW six-month Performance membership at 40% off. This is also the last opportunity to take advantage of the Performance Day Pass sale, which ends Friday, June 27. The Day Pass lets gamers access cloud gaming for 24 hours before diving into the six-month Performance membership.

Two distinct worlds — where secrets simmer and imagination runs wild — are streaming onto the cloud this week.

Step into the surreal, retro-futuristic streets of We Happy Few, where a society obsessed with happiness hides its secrets behind a mask of forced cheer and a haze of “Joy.” This darkly whimsical adventure invites players to blend in, break out and uncover the truth lurking beneath the surface of Wellington Wells.

Broken Age spins a charming, hand-painted tale of two teenagers leading parallel lives in worlds at once strange and familiar. One of the teens yearns to escape a stifling spaceship, and the other is destined to challenge ancient traditions. With witty dialogue and heartfelt moments, Broken Age is a storybook come to life, brimming with quirky characters and clever puzzles.

Each of these unforgettable adventures brings its own flavor — be it dark satire, whimsical wonder or pulse-pounding suspense — offering a taste of gaming at its imaginative peaks. Stream these captivating worlds straight from the cloud and enjoy seamless gameplay, no downloads or high-end hardware required.

Get ready for the SteelSeries Nimbus Cloud, a new dual-mode cloud controller that reaches new heights when paired with GeForce NOW.

Designed for versatility and comfort, and crafted specifically for cloud gaming, the SteelSeries Nimbus Cloud effortlessly shifts from a mobile device controller to a full-sized wireless controller, delivering top-notch performance and broad compatibility across devices.

The Nimbus Cloud enables gamers to play wherever they are, as it easily adapts to fit iPhones and Android phones. Or collapse and connect the controller via Bluetooth to a gaming rig or smart TV. Transform any space into a personal gaming station with GeForce NOW and the Nimbus Cloud, part of the list of recommended products for an elevated cloud gaming experience.

System Shock 2: 25th Anniversary Remaster is an overhaul of the acclaimed sci-fi horror classic, rebuilt by Nightdive Studios with enhanced visuals, refined gameplay and features such as cross-play co-op multiplayer. Face the sinister AI SHODAN and her mutant army aboard the starship Von Braun as a cybernetically enhanced soldier with upgradable skills, powerful weapons and psionic abilities. Stream the title from the cloud with GeForce NOW for ultimate flexibility and performance.

Look for the following games available to stream in the cloud this week:

What are you planning to play this weekend? Let us know on X or in the comments below.

The official GFN summer bucket list

Play anywhere

Stream on every screen you own

Finally crush that backlog

Skip every single download bar

Drop the emoji for the one you’re tackling right now

—

NVIDIA GeForce NOW (@NVIDIAGFN) June 25, 2025

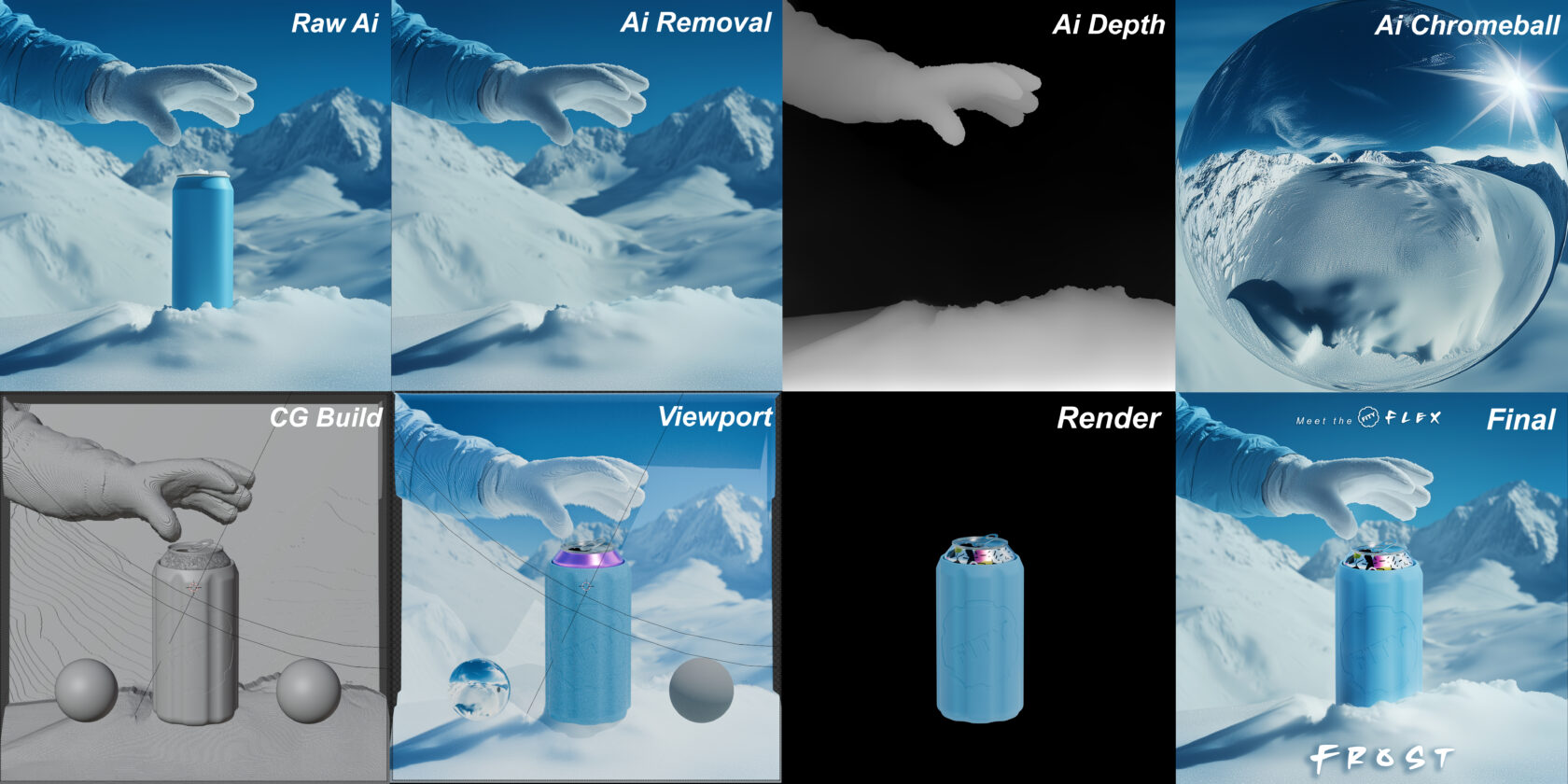

Mark Theriault founded the startup FITY envisioning a line of clever cooling products: cold drink holders that come with freezable pucks to keep beverages cold for longer without the mess of ice. The entrepreneur started with 3D prints of products in his basement, building one unit at a time, before eventually scaling to mass production.

Founding a consumer product company from scratch was a tall order for a single person. Going from preliminary sketches to production-ready designs was a major challenge. To bring his creative vision to life, Theriault relied on AI and his NVIDIA GeForce RTX-equipped system. For him, AI isn’t just a tool — it’s an entire pipeline to help him accomplish his goals. Read more about his workflow below.

Plus, GeForce RTX 5050 laptops start arriving today at retailers worldwide, from $999. GeForce RTX 5050 Laptop GPUs feature 2,560 NVIDIA Blackwell CUDA cores, fifth-generation AI Tensor Cores, fourth-generation RT Cores, a ninth-generation NVENC encoder and a sixth-generation NVDEC decoder.

In addition, NVIDIA’s Plug and Play: Project G-Assist Plug-In Hackathon — running virtually through Wednesday, July 16 — invites developers to explore AI and build custom G-Assist plug-ins for a chance to win prizes. Save the date for the G-Assist Plug-In webinar on Wednesday, July 9, from 10-11 a.m. PT, to learn more about Project G-Assist capabilities and fundamentals, and to participate in a live Q&A session.

To create his standout products, Theriault tinkers with potential FITY Flex cooler designs with traditional methods, from sketch to computer-aided design to rapid prototyping, until he finds the right vision. A unique aspect of the FITY Flex design is that it can be customized with fun, popular shoe charms.

For packaging design inspiration, Theriault prototypes with his preferred text-to-image generative AI model, Stable Diffusion XL, which runs 60% faster with the NVIDIA TensorRT software development kit. He runs the model through ComfyUI, a modular, node-based interface.

ComfyUI gives users granular control over every step of the generation process — prompting, sampling, model loading, image conditioning and post-processing. It’s ideal for advanced users like Theriault who want to customize how images are generated.
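To make the node-based idea concrete, here is a minimal Python sketch of a text-to-image graph written as plain data, plus a helper that works out a valid execution order. The layout (node IDs mapping to a `class_type` and `inputs`, with links written as `[source_id, output_index]`) follows what we understand to be ComfyUI's API-format workflow JSON, and the node names mirror its default workflow. The checkpoint filename, prompts, sampler settings and the `execution_order` helper are illustrative assumptions, not part of Theriault's setup or of ComfyUI itself.

```python
# Sketch of a node graph in the style of a ComfyUI API-format workflow.
# Assumption: field names mirror ComfyUI's default text-to-image workflow;
# the checkpoint filename, prompts and settings are placeholders.
workflow = {
    "1": {"class_type": "CheckpointLoaderSimple",
          "inputs": {"ckpt_name": "sd_xl_base_1.0.safetensors"}},
    "2": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "drink cooler packaging, studio light", "clip": ["1", 1]}},
    "3": {"class_type": "CLIPTextEncode",
          "inputs": {"text": "blurry, low quality", "clip": ["1", 1]}},
    "4": {"class_type": "EmptyLatentImage",
          "inputs": {"width": 1024, "height": 1024, "batch_size": 1}},
    "5": {"class_type": "KSampler",
          "inputs": {"model": ["1", 0], "positive": ["2", 0], "negative": ["3", 0],
                     "latent_image": ["4", 0], "seed": 42, "steps": 30, "cfg": 7.0,
                     "sampler_name": "euler", "scheduler": "normal", "denoise": 1.0}},
    "6": {"class_type": "VAEDecode",
          "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
    "7": {"class_type": "SaveImage",
          "inputs": {"images": ["6", 0], "filename_prefix": "fity_flex"}},
}


def upstream_ids(node):
    """Return IDs of the nodes this node reads from (links are [id, output_index])."""
    return [v[0] for v in node["inputs"].values()
            if isinstance(v, list) and len(v) == 2 and isinstance(v[0], str)]


def execution_order(graph):
    """Hypothetical helper: depth-first topological sort so inputs run before consumers."""
    order, visiting, done = [], set(), set()

    def visit(node_id):
        if node_id in done:
            return
        if node_id in visiting:
            raise ValueError(f"cycle detected at node {node_id}")
        visiting.add(node_id)
        for dep in upstream_ids(graph[node_id]):
            visit(dep)
        visiting.discard(node_id)
        done.add(node_id)
        order.append(node_id)

    for node_id in sorted(graph):
        visit(node_id)
    return order


if __name__ == "__main__":
    for node_id in execution_order(workflow):
        print(node_id, workflow[node_id]["class_type"])
```

Because every step is its own node, swapping the sampler, adding a conditioning stage or changing the output step only means editing one entry in the graph.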

NVIDIA and GeForce RTX GPUs based on the NVIDIA Blackwell architecture include fifth-generation Tensor Cores designed to accelerate AI and deep learning workloads. These GPUs work with CUDA optimizations in PyTorch to seamlessly accelerate ComfyUI, reducing generation time on FLUX.1-dev, an image generation model from Black Forest Labs, from two minutes per image on the Mac M3 Ultra to about four seconds on the GeForce RTX 5090 desktop GPU.

ComfyUI can also add ControlNets — AI models that help control image generation — that Theriault uses for tasks like guiding human poses, setting compositions via depth mapping and converting scribbles to images.

Theriault even creates his own fine-tuned models to keep his style consistent. He uses low-rank adaptation (LoRA) models, which are small, efficient adapters inserted into specific layers of the network, to enable hyper-customized generation at minimal compute cost.
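The appeal of LoRA is easiest to see in numbers. The sketch below, in plain Python, uses the standard LoRA formulation in which a frozen weight matrix W is augmented with a low-rank update (alpha / r) · B · A. The layer size, rank and scaling are illustrative assumptions, not values from Theriault's Flux LoRA.

```python
# Minimal LoRA sketch in pure Python (no ML framework needed).
# Assumption: dimensions, rank and alpha below are illustrative only.

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def lora_effective_weight(w, a, b, alpha):
    """Return W + (alpha / r) * B @ A, where r is the adapter rank.

    w: frozen base weight, shape (d_out, d_in)
    a: trainable down-projection, shape (r, d_in)
    b: trainable up-projection, shape (d_out, r)
    """
    rank = len(a)
    scale = alpha / rank
    delta = matmul(b, a)
    return [[w_ij + scale * d_ij for w_ij, d_ij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


def trainable_params(d_out, d_in, rank):
    """Compare full fine-tuning with LoRA for one (d_out x d_in) layer."""
    full = d_out * d_in
    lora = rank * (d_in + d_out)
    return full, lora


if __name__ == "__main__":
    w = [[1.0, 0.0], [0.0, 1.0]]   # frozen 2x2 base weight
    a = [[1.0, 2.0]]               # rank-1 down-projection (1x2)
    b = [[0.5], [0.0]]             # rank-1 up-projection (2x1)
    print(lora_effective_weight(w, a, b, alpha=1.0))

    full, lora = trainable_params(d_out=3072, d_in=3072, rank=16)
    print(f"full: {full:,} params, LoRA: {lora:,} params ({lora / full:.2%})")
```

Only `a` and `b` are trained, so the adapter for this hypothetical layer holds roughly 1% of the parameters that full fine-tuning would touch.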

“Over the last few months, I’ve been shifting from AI-assisted computer graphics renders to fully AI-generated product imagery using a custom Flux LoRA I trained in house. My RTX 4080 SUPER GPU has been essential for getting the performance I need to train and iterate quickly.” – Mark Theriault, founder of FITY

Theriault also taps into generative AI to create marketing assets like FITY Flex product packaging. He uses FLUX.1, which excels at generating legible text within images, addressing a common challenge in text-to-image models.

Though FLUX.1 models can typically consume over 23GB of VRAM, NVIDIA has collaborated with Black Forest Labs to help reduce the size of these models using quantization — a technique that reduces model size while maintaining quality. The models were then accelerated with TensorRT, which provides an up to 2x speedup over PyTorch.
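As a rough illustration of the idea, the sketch below applies symmetric 4-bit integer quantization to a handful of weights in plain Python. This is a deliberately simple scheme chosen for readability. It is not the method NVIDIA and Black Forest Labs applied to FLUX.1, and the sample weights are made up.

```python
# Toy symmetric 4-bit quantization in pure Python.
# Assumption: per-tensor scaling and made-up weights, for illustration only.

def quantize_int4(weights):
    """Map floats to integers in [-7, 7] plus one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-7, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]


def storage_bytes(num_weights, bits_per_weight):
    """Bytes needed to store the weights alone (ignores scales and metadata)."""
    return num_weights * bits_per_weight / 8


if __name__ == "__main__":
    weights = [0.70, -0.33, 0.12, 0.0, -0.70]
    codes, scale = quantize_int4(weights)
    restored = dequantize(codes, scale)
    worst = max(abs(w - r) for w, r in zip(weights, restored))
    print("codes:", codes)
    print("max abs error:", worst)
    # 16-bit vs 4-bit storage for one billion weights
    print(storage_bytes(1_000_000_000, 16) / 1e9, "GB vs",
          storage_bytes(1_000_000_000, 4) / 1e9, "GB")
```

Each weight is stored in a quarter of the bits used by a 16-bit format, at the cost of a rounding error of at most half a quantization step per weight.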

To simplify using these models in ComfyUI, NVIDIA created the FLUX.1 NIM microservice, a containerized version of FLUX.1 that can be loaded in ComfyUI and enables FP4 quantization and TensorRT support. Combined, the models come down to just over 11GB of VRAM, and performance improves by 2.5x.

Theriault uses Blender’s Cycles renderer to render out final files. For 3D workflows, NVIDIA offers the AI Blueprint for 3D-guided generative AI to ease the positioning and composition of 3D images, so anyone interested in this method can quickly get started.

Finally, Theriault uses large language models to generate marketing copy — tailored for search engine optimization, tone and storytelling — as well as to complete his patent and provisional applications, work that usually costs thousands of dollars in legal fees and considerable time.

“As a one-man band with a ton of content to generate, having on-the-fly generation capabilities for my product designs really helps speed things up.” – Mark Theriault, founder of FITY

Every texture, every word, every photo, every accessory was a micro-decision, Theriault said. AI helped him survive the “death by a thousand cuts” that can stall solo startup founders, he added.

Each week, the RTX AI Garage blog series features community-driven AI innovations and content for those looking to learn more about NVIDIA NIM microservices and AI Blueprints, as well as building AI agents, creative workflows, digital humans, productivity apps and more on AI PCs and workstations.

Plug in to NVIDIA AI PC on Facebook, Instagram, TikTok and X — and stay informed by subscribing to the RTX AI PC newsletter.

Follow NVIDIA Workstation on LinkedIn and X.

See notice regarding software product information.

Editor’s note: This blog is a part of Into the Omniverse, a series focused on how developers, 3D practitioners and enterprises can transform their workflows using the latest advances in OpenUSD and NVIDIA Omniverse.

Simulated driving environments enable engineers to safely and efficiently train, test and validate autonomous vehicles (AVs) across countless real-world and edge-case scenarios without the risks and costs of physical testing.

These simulated environments can be created through neural reconstruction of real-world data from AV fleets or generated with world foundation models (WFMs) — neural networks that understand physics and real-world properties. WFMs can be used to generate synthetic datasets for enhanced AV simulation.

To help physical AI developers build such simulated environments, NVIDIA unveiled major advances in WFMs at the GTC Paris and CVPR conferences earlier this month. These new capabilities enhance NVIDIA Cosmos — a platform of generative WFMs, advanced tokenizers, guardrails and accelerated data processing tools.

Key innovations like Cosmos Predict-2, the Cosmos Transfer-1 NVIDIA preview NIM microservice and Cosmos Reason are improving how AV developers generate synthetic data, build realistic simulated environments and validate safety systems at unprecedented scale.

Universal Scene Description (OpenUSD), a unified data framework and standard for physical AI applications, enables seamless integration and interoperability of simulation assets across the development pipeline. OpenUSD standardization plays a critical role in ensuring 3D pipelines are built to scale.

NVIDIA Omniverse, a platform of application programming interfaces, software development kits and services for building OpenUSD-based physical AI applications, enables simulations from WFMs and neural reconstruction at world scale.

Leading AV organizations — including Foretellix, Mcity, Oxa, Parallel Domain, Plus AI and Uber — are among the first to adopt Cosmos models.

Cosmos Predict-2, NVIDIA’s latest WFM, generates high-quality synthetic data by predicting future world states from multimodal inputs like text, images and video. This capability is critical for creating temporally consistent, realistic scenarios that accelerate training and validation of AVs and robots.

In addition, Cosmos Transfer, a control model that adds variations in weather, lighting and terrain to existing scenarios, will soon be available to 150,000 developers on CARLA, a leading open-source AV simulator. This greatly expands the broad AV developer community’s access to advanced AI-powered simulation tools.

Developers can start integrating synthetic data into their own pipelines using the NVIDIA Physical AI Dataset. The latest release includes 40,000 clips generated using Cosmos.

Building on these foundations, the Omniverse Blueprint for AV simulation provides a standardized, API-driven workflow for constructing rich digital twins, replaying real-world sensor data and generating new ground-truth data for closed-loop testing.

The blueprint taps into OpenUSD’s layer-stacking and composition arcs, which enable developers to collaborate asynchronously and modify scenes nondestructively. This helps create modular, reusable scenario variants to efficiently generate different weather conditions, traffic patterns and edge cases.
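The nondestructive override behavior can be illustrated without any USD tooling. The sketch below is a toy model in plain Python, not the OpenUSD API: each layer holds sparse "opinions" about attribute values, and the value that wins is the one from the strongest layer that has an opinion. The layer names, attribute paths and the `resolve` and `compose` helpers are all hypothetical.

```python
# Toy model of layer stacking (NOT the OpenUSD API; all names are hypothetical).
# Layers are listed strongest-first; each holds sparse "opinions" about
# attribute values, and weaker layers are never modified.

base_scene = {
    "/World/Sky.weather": "clear",
    "/World/Sun.intensity": 1.0,
    "/World/Traffic.density": "light",
}
rain_variant = {"/World/Sky.weather": "rain", "/World/Sun.intensity": 0.3}
rush_hour_variant = {"/World/Traffic.density": "heavy"}


def resolve(layer_stack, attribute):
    """Return the opinion from the strongest layer that defines the attribute."""
    for layer in layer_stack:
        if attribute in layer:
            return layer[attribute]
    raise KeyError(attribute)


def compose(layer_stack):
    """Flatten a layer stack into resolved values without mutating any layer."""
    attributes = sorted({name for layer in layer_stack for name in layer})
    return {name: resolve(layer_stack, name) for name in attributes}


if __name__ == "__main__":
    # Two scenario variants built from the same untouched base layer.
    rainy_rush_hour = [rush_hour_variant, rain_variant, base_scene]
    sunny_rush_hour = [rush_hour_variant, base_scene]
    print(compose(rainy_rush_hour))
    print(compose(sunny_rush_hour))
```

The base scene is shared and never edited, so each new weather or traffic variant is just another small layer placed on top. In OpenUSD itself, sublayers, references and variant sets play this role.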

To bolster the operational safety of AV systems, NVIDIA earlier this year introduced NVIDIA Halos — a comprehensive safety platform that integrates the company’s full automotive hardware and software stack with AI research focused on AV safety.

The new Cosmos models (Cosmos Predict-2, the Cosmos Transfer-1 NIM and Cosmos Reason) deliver further safety enhancements to the Halos platform, enabling developers to create diverse, controllable and realistic scenarios for training and validating AV systems.

These models, trained on massive multimodal datasets including driving data, amplify the breadth and depth of simulation, allowing for robust scenario coverage — including rare and safety-critical events — while supporting post-training customization for specialized AV tasks.

At CVPR, NVIDIA was recognized as an Autonomous Grand Challenge winner, highlighting its leadership in advancing end-to-end AV workflows. The challenge used OpenUSD’s robust metadata and interoperability to simulate sensor inputs and vehicle trajectories in semi-reactive environments, achieving state-of-the-art results in safety and compliance.

Learn more about how developers are leveraging tools like CARLA, Cosmos, and Omniverse to advance AV simulation in this livestream replay:

Hear NVIDIA Director of Autonomous Vehicle Research Marco Pavone on the NVIDIA AI Podcast share how digital twins and high-fidelity simulation are improving vehicle testing, accelerating development and reducing real-world risks.

Learn more about what’s next for AV simulation with OpenUSD by watching the replay of NVIDIA founder and CEO Jensen Huang’s GTC Paris keynote.

Looking for live opportunities to learn more about OpenUSD? Don’t miss sessions and labs happening at SIGGRAPH 2025, August 10–14.

Discover why developers and 3D practitioners are using OpenUSD and learn how to optimize 3D workflows with the self-paced “Learn OpenUSD” curriculum for 3D developers and practitioners, available for free through the NVIDIA Deep Learning Institute.

Explore the Alliance for OpenUSD forum and the AOUSD website.

Stay up to date by subscribing to NVIDIA Omniverse news, joining the community and following NVIDIA Omniverse on Instagram, LinkedIn, Medium and X.
